- The AI Revolution is fueled by specialized hardware that goes beyond traditional CPUs.

- GPUs (Graphics Processing Units) are key, excelling at parallel processing for Deep Learning.

- TPUs (Tensor Processing Units) are custom-built by Google specifically for AI workloads.

- These chips provide the immense computational power needed for training complex AI models.

- Understanding these “powerful chips” is crucial for appreciating AI’s capabilities and scalability.


Introduction

When we talk about the incredible advancements in AI today, from sophisticated image recognition to powerful Large Language Models (LLMs), it’s easy to focus solely on the clever algorithms and vast data sets. The hardware underneath matters just as much. Traditional Central Processing Units (CPUs) that power our everyday computers are generalists; they’re great at many things but not optimized for the specific, highly parallel computations that Machine Learning and Deep Learning demand. This is where specialized chips like GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units) come into play. Understanding what hardware is driving the AI Revolution is crucial to grasping AI’s current capabilities, its scalability, and its future potential, especially as AI expands into resource-constrained environments that require highly efficient processing.

Core Concepts

The “AI Revolution” is fundamentally a computational revolution. Specialized hardware provides the immense processing power needed to train and run complex AI models.

Here are five types of chips driving the AI Revolution:

- CPUs (Central Processing Units): The Generalists

- Definition: The traditional “brain” of a computer, designed for general-purpose computing. They excel at sequential tasks and complex logic.

- Role in AI: While not ideal for heavy Deep Learning training, CPUs are still vital for orchestrating AI workloads, managing data, and running smaller AI models or inference tasks, especially in Edge AI devices where dedicated accelerators might not be present.

- Analogy: A CPU is like a highly intelligent, versatile general manager who can handle many different types of tasks, but prefers to do them one at a time or in small batches.

- GPUs (Graphics Processing Units): The Parallel Powerhouses

- Definition: Originally designed to accelerate graphics rendering by performing many simple calculations simultaneously. This parallel processing capability proved to be perfectly suited for the matrix multiplications and vector operations at the heart of Deep Learning.

- Role in AI: GPUs are the workhorses for training most large Machine Learning and Deep Learning models. They allow AI researchers to process massive data sets and train complex neural networks in days or weeks, rather than months or years.

- Analogy: A GPU is like a massive team of diligent workers, each capable of doing a simple calculation, but all working simultaneously on different parts of a huge problem. This is ideal for tasks like training a Deep Learning model.

- TPUs (Tensor Processing Units): Google’s Custom AI Accelerators

- Definition: Custom-designed Application-Specific Integrated Circuits (ASICs) developed by Google specifically for accelerating Machine Learning workloads. They were originally built around Google’s TensorFlow framework and are now also used with JAX and PyTorch through the XLA compiler. They are highly optimized for the mathematical operations (tensor computations) common in Deep Learning.

- Role in AI: TPUs are designed to deliver even higher performance and energy efficiency than GPUs for certain types of Deep Learning tasks, especially within Google’s cloud infrastructure. They are crucial for training Google’s own Large Language Models (LLMs) and other advanced AI.

- Analogy: A TPU is like a specialized factory assembly line custom-built to produce one specific product (AI calculations) with maximum efficiency and speed.

- NPUs (Neural Processing Units) / AI Accelerators: Edge Intelligence

- Definition: Specialized processors or co-processors designed to efficiently run AI inference (applying a trained model) on Edge AI devices like smartphones, smart cameras, and IoT sensors. They are optimized for low power consumption and real-time performance.

- Role in AI: NPUs bring AI capabilities directly to devices, enabling features like on-device facial recognition, voice assistants, and real-time object detection without constantly sending data to the cloud. They are key for privacy, latency, and autonomy.

- Analogy: An NPU is like a compact, battery-powered calculator designed to quickly solve one specific type of math problem (AI inference) on the go.

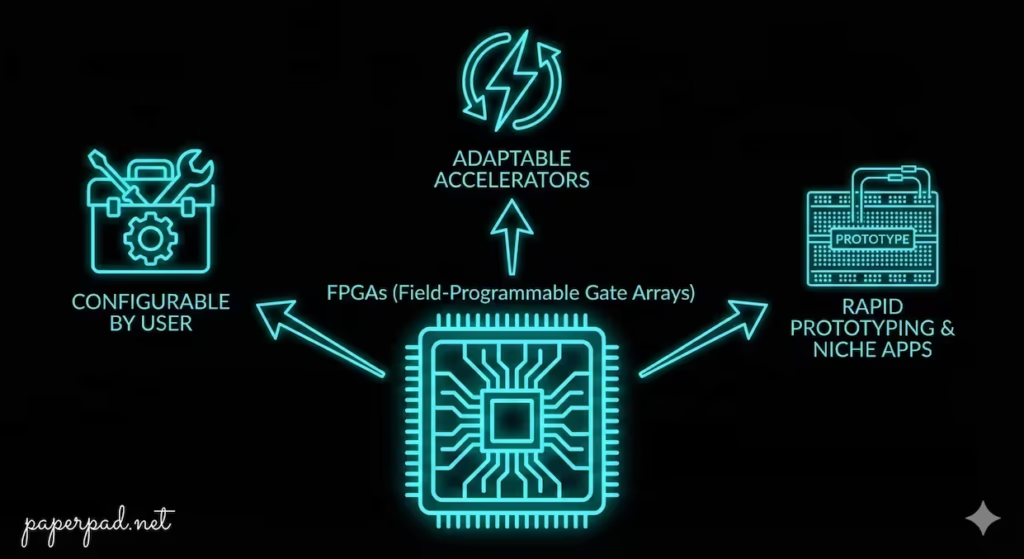

- FPGAs (Field-Programmable Gate Arrays): The Adaptable Accelerators

- Definition: Integrated circuits that can be configured by a user after manufacturing. They offer a balance between the flexibility of CPUs and the speed of ASICs, allowing custom hardware acceleration for specific AI algorithms.

- Role in AI: FPGAs are used in niche AI applications where customizability and energy efficiency are critical, especially for specific inference workloads or for rapid prototyping of new AI architectures.

- Analogy: An FPGA is like a LEGO set that can be reconfigured to build many different specialized machines for AI tasks, adapting to new needs without having to build a new factory.

These diverse hardware solutions form the backbone of the AI architecture, each playing a distinct role in the complex workflow of AI development and deployment.
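To make the "massive team of workers" analogy concrete, here is a minimal sketch in plain Python. It shows why matrix multiplication parallelizes so well: every output row (in fact every output cell) is an independent dot product, so the work can be handed out in any order. The function names and the tiny matrices are illustrative assumptions, and Python threads stand in for hardware cores only to show the independence of the work, not to demonstrate real speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, col):
    """Multiply-accumulate: the basic operation accelerators repeat at scale."""
    return sum(a * b for a, b in zip(row, col))


def matmul_sequential(a, b):
    """Compute every output cell one after another, generalist-style."""
    cols = list(zip(*b))  # columns of b
    return [[dot(row, col) for col in cols] for row in a]


def matmul_by_rows(a, b, workers=4):
    """Hand each output row to a separate worker.

    No row depends on any other row, so the work can be split freely.
    """
    cols = list(zip(*b))

    def one_row(row):
        return [dot(row, col) for col in cols]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(one_row, a))


# Illustrative 3x2 and 2x3 matrices (hypothetical data).
A = [[1, 2], [3, 4], [5, 6]]
B = [[7, 8, 9], [10, 11, 12]]

print(matmul_sequential(A, B))  # [[27, 30, 33], [61, 68, 75], [95, 106, 117]]
print(matmul_by_rows(A, B) == matmul_sequential(A, B))  # True
```

A GPU applies the same idea with thousands of hardware lanes instead of four software threads, which is why workloads dominated by matrix math benefit so much from it.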

How It Works

The stage of the AI development workflow largely determines which hardware is used.

- Data Preparation (CPU-intensive):

- Before Machine Learning can begin, massive data sets need to be collected, cleaned, and pre-processed. This stage often involves complex data manipulation and traditional programming, which is typically handled by CPUs.

- Model Training (GPU/TPU-intensive):

- This is where the specialized hardware shines. Training a Deep Learning neural network involves performing billions or trillions of multiply-and-add operations, organized as matrix multiplications and other linear algebra operations.

- GPUs (or TPUs in Google’s cloud) excel at this. They can execute these operations in parallel across thousands of their processing cores, significantly accelerating the learning process. This stage is highly resource-intensive and often takes place in Cloud Computing environments.

- Model Optimization & Compression (CPU/Specialized Software):

- After training, the large AI model might be optimized and compressed to run more efficiently. This often involves software tools running on CPUs or specialized compilers for NPUs.

- Model Deployment & Inference (CPU/GPU/NPU/FPGA):

- Once the model is trained and optimized, it needs to be deployed to make predictions on new data.

- For large-scale inference (e.g., serving millions of users), GPUs or TPUs in the cloud are used to handle the high throughput.

- For Edge AI devices (smartphones, cameras, drones), specialized NPUs or FPGAs are employed to perform inference with low power consumption and minimal latency, enabling on-device autonomy. CPUs still manage the overall device operation.

- Monitoring & Management (CPU-intensive):

- The overall workflow of managing, monitoring, and updating AI models (whether in the cloud or at the edge) is typically orchestrated by CPUs.

This dynamic pipeline ensures that the right hardware is utilized for the right task, optimizing cost, performance, and efficiency.
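The optimization and compression stage above is not tied to one technique in this article. One widely used option is quantization, which stores weights as small integers instead of floats. The following is a minimal sketch of symmetric 8-bit quantization in plain Python; the function names and the sample weights are assumptions for illustration, and real toolchains for NPUs do considerably more than this.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0  # avoid dividing by zero
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights from a trained layer.
weights = [0.5, -1.27, 0.0, 1.0]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)  # [50, -127, 0, 100]
for original, approx in zip(weights, restored):
    # Each value is off by at most half a quantization step.
    print(original, round(approx, 4))
```

Each weight now fits in one byte instead of four (for 32-bit floats), at the price of a small rounding error. That trade is a large part of why compressed models fit on edge devices.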

Real-World Examples

These specialized chips are the unsung heroes behind many of AI’s biggest breakthroughs.

- Training a Large Language Model (Cloud GPUs/TPUs):

- Scenario: Developing a new Large Language Model (LLM) like GPT-4 or similar.

- How it works: This requires processing text data sets containing hundreds of billions or even trillions of words. Training such a model can take thousands of GPUs or TPUs working in parallel for weeks or months. Without this specialized computational power, models of this scale would be impractical to train. It also shows why Cloud Computing is so central to large-scale AI: few organizations can run that much hardware themselves.

- Real-time Object Detection on a Smartphone (NPUs):

- Scenario: Your smartphone’s camera app can instantly identify objects, people, or text in its view, or perform facial recognition for unlocking.

- How it works: This is powered by an NPU (Neural Processing Unit) embedded directly in your phone’s chip. The AI model for object detection, previously trained on GPUs in the cloud, is compressed and loaded onto the NPU. This allows the phone to perform inference at high speed with low power consumption, providing real-time AI features directly on the device with high privacy and minimal latency.

- Data Center AI Inference for Image Search (TPUs/GPUs):

- Scenario: You search your photo library on a platform like Google Photos, and it quickly finds pictures by the objects, locations, or people in them.

- How it works: This massive inference workload is handled by farms of TPUs (in Google’s case) or GPUs in the provider’s data centers. When you upload photos, Computer Vision models quickly analyze them and tag them with relevant keywords. Specialized hardware is what makes it feasible to process that volume at that speed for millions of users.
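To see why the LLM training scenario above needs thousands of chips, a back-of-the-envelope calculation helps. The sketch below divides a total compute budget by the sustained throughput of a cluster. Every number in it (total operations, per-chip peak, utilization) is a hypothetical placeholder rather than a figure for any real model or chip, and it assumes throughput scales linearly with chip count, which ignores communication overhead.

```python
SECONDS_PER_DAY = 86_400


def training_days(total_flops, chips, peak_flops_per_chip, utilization):
    """Wall-clock days = total work / sustained cluster throughput."""
    sustained = chips * peak_flops_per_chip * utilization
    return total_flops / sustained / SECONDS_PER_DAY


# Hypothetical inputs, chosen only to illustrate the arithmetic.
TOTAL_FLOPS = 1e24      # floating-point operations for the whole training run
PEAK_PER_CHIP = 1e15    # operations per second a single chip can reach at peak
UTILIZATION = 0.4       # fraction of peak actually sustained

for chips in (1, 1_000, 10_000):
    days = training_days(TOTAL_FLOPS, chips, PEAK_PER_CHIP, UTILIZATION)
    print(f"{chips:>6} chips: about {days:,.1f} days")
```

With these placeholder numbers, a single chip would need decades while a thousand chips finish in about a month. That is the gap between an impossible project and a feasible one.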

Benefits, Trade-offs, and Risks

Benefits

- Unprecedented Speed: Accelerates Deep Learning training and inference by orders of magnitude compared to CPUs.

- Scalability: Enables the development and deployment of increasingly complex and large AI models.

- Energy Efficiency: Specialized chips like TPUs and NPUs are highly optimized for AI workloads, and typically use less energy than general-purpose CPUs to complete the same AI task.

- New Capabilities: Makes possible real-time AI applications, Edge AI, and the development of Generative AI and LLMs.

- Cost Reduction (for specific tasks): While initial investment can be high, the efficiency gain often translates to lower operational cost for AI workloads.

Trade-offs/Limitations

- Specialization: GPUs and TPUs are highly specialized. While powerful for AI, they are less versatile than CPUs for general computing tasks.

- Cost: High-end GPUs and TPUs can be expensive, both to acquire and to power, impacting ROI for smaller projects.

- Programming Complexity: Optimizing AI algorithms to run efficiently on parallel hardware like GPUs can be more complex than traditional CPU programming.

- Rapid Obsolescence: The pace of innovation in AI hardware is extremely fast, leading to rapid obsolescence of older generations.

Risks & Guardrails

- Supply Chain Dependencies: Reliance on a few manufacturers (e.g., NVIDIA for GPUs) creates potential supply chain vulnerabilities.

- Environmental Impact: The immense power consumption of AI hardware, especially during training, raises environmental concerns.

- Ethical Implications of Power: The concentration of such powerful computing resources in a few hands raises ethical questions about access, control, and potential misuse of AI.

- Guardrail: Promote open standards and diverse hardware ecosystems, invest in energy-efficient AI research, and implement strict governance and ethical guardrails for AI development.

What to Do Next / Practical Guidance

Understanding AI hardware helps you make informed decisions about your AI journey.

- Now (Recognize the Role):

- Appreciate the Engine: Understand that without these specialized chips, much of the AI we see today wouldn’t be possible.

- Identify Hardware in Use: When you hear about an AI application, consider what kind of hardware is likely powering it (e.g., cloud GPUs for training, edge NPUs for on-device inference).

- Metrics to Watch: How fast is a new AI model trained? What kind of hardware made that possible?

- Next (Optimize Your AI Workflow):

- Choose Wisely: For AI development, select the hardware (or cloud service) that best fits your specific objective, constraints, and workflow (e.g., GPUs for training, NPUs for edge deployment).

- Optimize Models: Work to optimize your AI models for the target hardware to maximize performance and minimize cost and power consumption.

- Cloud vs. Edge: Understand the trade-offs between Cloud Computing (for massive training) and Edge AI (for low-latency, private inference) and choose appropriately.

- Metrics to Watch: Training time, inference latency, power consumption, and overall cost of your AI solution.

- Later (Innovate & Govern):

- Stay Updated: Keep abreast of new developments in AI hardware, as innovations can significantly impact AI capabilities.

- Advocate for Efficiency: Support research and development into more energy-efficient AI architectures and hardware.

- Ethical Considerations: Understand the ethical implications of powerful AI hardware, particularly concerning access, privacy, and potential misuse.

- Metrics to Watch: Long-term ROI of hardware investments, environmental impact, and adherence to ethical guidelines.
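Of the metrics listed above, inference latency is one of the easiest to start measuring yourself. Here is a minimal timing sketch using only the Python standard library. The `measure_latency` helper and the `fake_inference` stand-in are hypothetical names for illustration; in practice you would pass in a call to your own model on your target hardware.

```python
import statistics
import time


def measure_latency(fn, warmup=3, runs=20):
    """Return median and worst-case latency of fn() in milliseconds."""
    for _ in range(warmup):
        fn()  # warm-up calls are not timed
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000)
    return {
        "median_ms": statistics.median(samples),
        "max_ms": max(samples),
        "runs": runs,
    }


def fake_inference():
    """Stand-in for a real model call (hypothetical workload)."""
    return sum(i * i for i in range(10_000))


stats = measure_latency(fake_inference)
print(stats)
```

Reporting the median alongside the worst case is a deliberate choice: real-time features on edge devices are usually judged by their slow moments, not their average.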

Common Misconceptions

- “CPUs are obsolete for AI”: CPUs are still essential for orchestration, data management, and many inference tasks, especially on Edge AI devices.

- “All AI hardware is the same”: There’s a wide variety, each optimized for different AI workloads (training vs. inference, cloud vs. edge).

- “More cores always means better performance”: It depends on the task. GPUs have many simple cores for parallel tasks; CPUs have fewer, more powerful cores for sequential tasks.

- “AI hardware is only for big tech companies”: Cloud providers rent out this hardware on demand, so smaller teams and individuals can use it without buying it.

- “The software (algorithms) is the only important part”: The AI Revolution is a co-evolution of algorithms, data sets, and specialized hardware.
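The "more cores" misconception can be put into numbers with Amdahl's law, a standard formula that the article does not name but that captures the same point: the speedup from adding cores is capped by the part of the task that cannot run in parallel. The sketch below is a minimal illustration, and the example fractions are assumptions rather than measurements.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Best-case speedup when only part of a task can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workloads: one mostly sequential, one almost fully parallel.
for label, fraction in (("branch-heavy logic", 0.50), ("matrix math", 0.99)):
    speedup = amdahl_speedup(fraction, cores=1000)
    print(f"{label}: {speedup:.1f}x faster on 1000 cores")
```

With these inputs the half-sequential task gains less than 2x from a thousand cores, while the matrix-heavy task gains roughly 90x. That is why many simple cores suit Deep Learning and fewer, more powerful cores suit sequential work.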

Conclusion

The AI Revolution is not just about intelligent algorithms and vast data sets; it’s profoundly driven by specialized AI hardware. From the parallel processing power of GPUs and the custom efficiency of TPUs to the edge intelligence of NPUs and the adaptability of FPGAs, these powerful chips provide the computational muscle necessary for modern AI. Understanding what hardware is driving the AI Revolution is crucial for appreciating AI’s scalability, its capabilities, and its constraints. This diverse range of hardware enables everything from training massive Large Language Models in the cloud to performing real-time object detection on a smartphone, continuously pushing the boundaries of what Artificial Intelligence can achieve.