- Generative AI refers to AI models that can create new, original content (text, images, audio, video) rather than just analyzing or predicting from existing data.

- It’s a significant leap beyond traditional AI, allowing machines to be “creative.”

- Key technologies include Large Language Models (LLMs), generative adversarial networks (GANs), and diffusion models.

- Understanding “how machines create” is crucial for appreciating AI’s evolving capabilities and potential impact.

- Generative AI is transforming industries, but it also introduces new risks and ethical concerns.

Introduction

For decades, Artificial Intelligence (AI) was primarily about prediction and analysis. AI systems could recognize patterns, classify data, or forecast trends. But a new, transformative era of AI has emerged: Generative AI. This isn’t just about machines predicting an outcome; it’s about machines creating new content that can be hard to distinguish from human-made work. From writing compelling stories and generating realistic images to composing music and designing new molecules, generative AI marks a profound shift in what we expect from intelligent systems.

Core Concepts

Generative AI refers to a class of AI models designed to produce novel outputs. Unlike discriminative AI, which classifies or predicts labels for existing data, generative AI learns what the data itself looks like and produces new samples that resemble it.

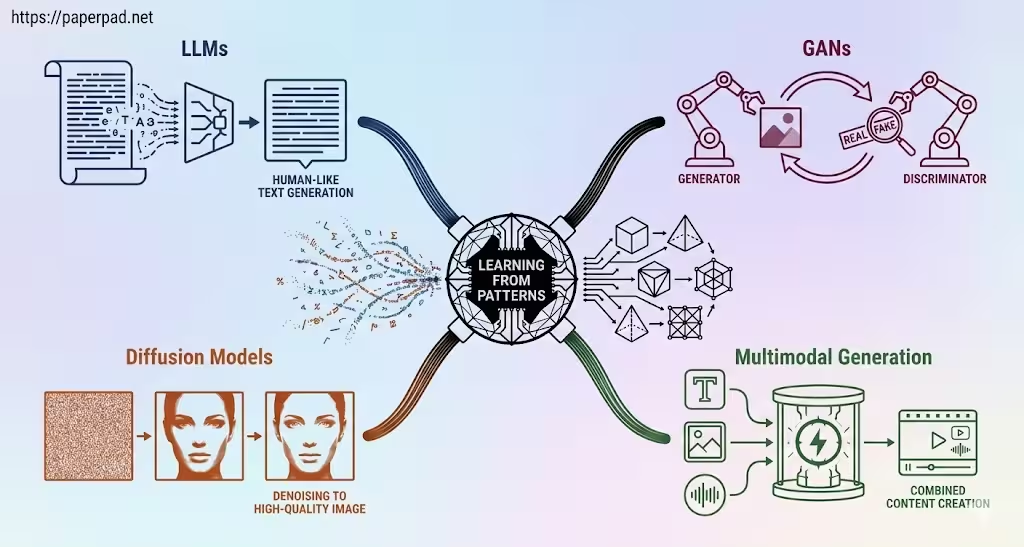

Here are five ways AI creates new content:

- Learning from Patterns (The Underlying Principle):

- Definition: At its core, generative AI learns the underlying patterns, structures, and distributions within a vast data set (e.g., millions of images, billions of text passages). It doesn’t copy; it learns the “rules” of what constitutes, say, a realistic human face or a coherent sentence.

- Analogy: Imagine an aspiring artist who studies thousands of paintings from various styles. They don’t copy any single painting, but they learn the principles of perspective, color theory, brushstrokes, and composition. Generative AI “learns” in a similar way, internalizing the creative “rules.”

- Large Language Models (LLMs) for Text Generation:

- Definition: These are Deep Learning models trained on colossal amounts of text data (often a large share of the public web, along with books and code). They learn the statistical relationships between words and phrases, typically by learning to predict the next piece of text, which allows them to generate human-like text, answer questions, summarize, translate, and even write code.

- Analogy: An LLM is like a super-prolific author who has read every book ever written. When given a prompt, it can generate new text that sounds like it came from a human, because it has learned the patterns of human language so thoroughly.

- Generative Adversarial Networks (GANs) for Image/Audio/Video:

- Definition: GANs consist of two competing neural networks: a “generator” that creates new content (e.g., fake images) and a “discriminator” that tries to distinguish real content from the generator’s fakes. They train each other, with the generator getting better at fooling the discriminator, and the discriminator getting better at spotting fakes.

- Analogy: Imagine a counterfeiter (generator) trying to print perfect fake money, and a detective (discriminator) trying to spot the fakes. Each gets better at their job by learning from the other, until the counterfeiter can produce nearly indistinguishable fakes.

- Diffusion Models for High-Quality Image/Video:

- Definition: These models learn to generate data by iteratively denoising an input. During training, noise is gradually added to real examples (the diffusion process) and the model learns to undo it. To generate, they start with random noise and gradually transform it into a coherent image (or other data type) by running that process in reverse. They have achieved remarkable results in generating highly realistic and diverse images.

- Analogy: Imagine starting with a canvas of pure static. A diffusion model is like an expert restorer who removes the noise a little at a time until a clear image emerges, guided by what it has learned “clear” images look like.

- Multimodal Generation (Combining Different Types of Content):

- Definition: Advanced generative AI can combine different forms of content. For example, generating an image from a text description (text-to-image), or generating video from text and images. This integrates capabilities from different generative models.

- Analogy: It’s like an AI that can read a script (text), then direct a movie (video), and compose the soundtrack (audio), all from its learned understanding of how these different creative elements combine.
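To make the first two ideas concrete, here is a deliberately tiny sketch of "learning patterns, then generating" for text. It is a word-level bigram model, which is far simpler than an LLM and is used here only as an illustration; the corpus, function names, and seed are all made up for this example.

```python
import random
from collections import defaultdict

def train_bigram_model(corpus):
    """Record which word follows which: the 'patterns' this toy model learns."""
    follows = defaultdict(list)
    words = corpus.split()
    for current_word, next_word in zip(words, words[1:]):
        follows[current_word].append(next_word)
    return follows

def generate(follows, start_word, max_words, rng):
    """Build a new word sequence by repeatedly sampling an observed next word."""
    output = [start_word]
    while len(output) < max_words:
        candidates = follows.get(output[-1])
        if not candidates:  # no observed continuation, so stop early
            break
        output.append(rng.choice(candidates))
    return " ".join(output)

# Hypothetical training text; a real model would see vastly more data.
corpus = "the cat sat on the mat and the dog sat on the rug"
model = train_bigram_model(corpus)
print(generate(model, "the", 8, random.Random(0)))
```

The generated sentence may never appear in the corpus as a whole, yet every word-to-word step was observed during training. That is the sense in which a generative model recombines learned patterns rather than copying.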

Together, these techniques are expanding the range of content AI systems can produce, from text and code to images, audio, and video.
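The diffusion idea described above can be partly illustrated in code. The sketch below shows only the forward (noising) half of the process on a handful of numbers standing in for pixels; the learned denoising network that reverses it is omitted. The linear noise schedule and its default values are assumptions chosen for illustration, and the function names are hypothetical.

```python
import math
import random

def cumulative_signal_levels(num_steps, beta_start=1e-4, beta_end=0.02):
    """Return, for each step, the fraction of the original signal variance left."""
    alpha_bars = []
    running_product = 1.0
    for step in range(num_steps):
        # Noise amount grows linearly from beta_start to beta_end (assumed schedule).
        beta = beta_start + (beta_end - beta_start) * step / max(num_steps - 1, 1)
        running_product *= 1.0 - beta
        alpha_bars.append(running_product)
    return alpha_bars

def add_noise(values, alpha_bar, rng):
    """Forward diffusion: blend the clean values with Gaussian noise."""
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [signal_scale * v + noise_scale * rng.gauss(0.0, 1.0) for v in values]

clean_pixels = [0.9, 0.1, 0.5, 0.7]  # stand-in for image data
alpha_bars = cumulative_signal_levels(1000)
rng = random.Random(0)
for step in (0, 499, 999):
    noisy = add_noise(clean_pixels, alpha_bars[step], rng)
    print(step, round(alpha_bars[step], 4), [round(v, 2) for v in noisy])
```

By the last step almost none of the original signal remains, which is why generation can start from pure noise: a trained model walks these steps backwards, removing a little noise each time.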

How It Works

The workflow for generative AI typically involves massive training and then creative inference.

- Massive Data Collection:

- Vast data sets of diverse content (e.g., billions of text passages for LLMs, millions of images for GANs/Diffusion models) are gathered. The quality and representativeness of this data are paramount.

- Model Architecture Design:

- A suitable Deep Learning architecture is chosen and designed (e.g., a Transformer for LLMs, or a GAN or diffusion model for images). These are often extremely large neural networks with billions of parameters.

- Intensive Training:

- The model is trained on the massive data set using powerful GPUs or TPUs in Cloud Computing environments. For the largest models, this process can take weeks or months and cost millions of dollars. The model learns the underlying patterns and relationships within the data.

- Prompt/Input:

- Once trained, the user provides a “prompt” or input (e.g., a text description, a partial image, a musical theme) to guide the generation process.

- Generation (Inference):

- The generative AI model processes the prompt and, based on its learned patterns, creates new content. This is the inference phase, where the model applies what it learned to generate something novel.

- Refinement & Iteration:

- Users can often refine the output by providing further prompts or adjustments. The process can be iterative, allowing for collaborative creation.

- Evaluation & Feedback:

- The quality and originality of the generated content are evaluated (often by humans). Feedback loops are crucial for improving future models and fine-tuning existing ones.

This pipeline demonstrates how AI moves from pattern recognition to content creation, but it still requires careful human guidance and guardrails.
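For a text model, the generation step in the workflow above usually comes down to turning the model's scores for each candidate token into probabilities and sampling one. The sketch below shows that idea with a "temperature" setting that controls how adventurous the sampling is. The vocabulary and scores are invented for illustration, and temperature is a common sampling control assumed here rather than something the workflow above specifies.

```python
import math
import random

def softmax(scores, temperature=1.0):
    """Convert raw scores to probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]  # temperature must be > 0
    largest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - largest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocabulary, scores, temperature, rng):
    """Pick one token at random, weighted by its softmax probability."""
    probabilities = softmax(scores, temperature)
    return rng.choices(vocabulary, weights=probabilities, k=1)[0]

vocabulary = ["sunset", "city", "robot", "banana"]
scores = [2.0, 1.5, 0.5, -1.0]  # hypothetical model scores for one prompt
rng = random.Random(42)
for temperature in (0.2, 1.0, 2.0):
    picks = [sample_next_token(vocabulary, scores, temperature, rng) for _ in range(5)]
    print(temperature, picks)
```

Low temperatures concentrate probability on the top-scoring token, giving more predictable output; higher temperatures spread it out, which is one reason the same prompt can yield different results on each run.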

Real-World Examples

Generative AI is already transforming many fields.

- Content Creation & Marketing:

- Scenario: A marketing team needs to quickly generate multiple variations of ad copy or social media posts.

- How it works: An LLM can take a few bullet points about a product and generate several creative and engaging ad slogans, product descriptions, or blog post outlines. This allows for rapid content iteration and personalization at scale, which can improve ROI.

- Emerging Market Context: For businesses in emerging markets with limited marketing budgets, generative AI can provide accessible tools for creating localized content, translating marketing materials, and reaching diverse customer segments more efficiently.

- Image & Art Generation:

- Scenario: A graphic designer needs unique images for a project, or an artist wants to explore new creative styles.

- How it works: Tools based on GANs or Diffusion Models can generate photorealistic images from simple text descriptions (e.g., “a futuristic city at sunset, cyberpunk style”) or transform existing images into different artistic styles. This democratizes image creation and accelerates creative workflows.

- Emerging Market Context: Artists and designers in regions with limited access to expensive stock photo libraries or traditional artistic tools can use generative AI to create unique visual content for their projects, fostering local creativity and economic opportunities.

- Code Generation & Software Development:

- Scenario: A software developer needs help writing boilerplate code, debugging, or translating code between languages.

- How it works: LLMs trained on vast code repositories can generate code snippets, suggest bug fixes, explain complex functions, or even create entire functions based on natural language prompts. This can significantly boost developer productivity and reduce development cost, provided the generated code is reviewed and tested.

- Emerging Market Context: Generative AI for code can lower the barrier to entry for aspiring developers, providing intelligent coding assistants that help them learn faster and contribute to software projects, even with less formal training.

- Drug Discovery & Materials Science:

- Scenario: Scientists are searching for new molecules with specific properties for medicine or materials.

- How it works: Generative AI models can “design” novel molecular structures that are predicted to have desired characteristics. Instead of exhaustively testing millions of compounds, AI suggests promising candidates for laboratory validation, which can substantially speed up the discovery process.
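As a small illustration of the marketing scenario above, the sketch below assembles a prompt from a few product bullet points. It only builds the text; sending it to a model is left out because that depends on the tool you use. The function name, the product, and the wording of the instructions are all hypothetical.

```python
def build_ad_copy_prompt(product_name, selling_points, audience, num_variations=3):
    """Assemble a plain-text prompt asking for several ad copy variations."""
    if not selling_points:
        raise ValueError("at least one selling point is required")
    bullet_lines = "\n".join(f"- {point}" for point in selling_points)
    return (
        f"Write {num_variations} short ad slogans for {product_name}.\n"
        f"Target audience: {audience}.\n"
        f"Key selling points:\n{bullet_lines}\n"
        "Keep each slogan under 12 words and avoid unverifiable claims."
    )

prompt = build_ad_copy_prompt(
    "SolarCharge Mini",  # hypothetical product
    ["charges a phone in two hours", "fits in a pocket"],
    "commuters in cities with unreliable power",
)
print(prompt)
```

Keeping the prompt in a function like this makes it easy to vary the audience or the number of variations and compare the results side by side.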

Benefits, Trade-offs, and Risks

Benefits

- Unprecedented Creativity: Enables machines to generate novel content, unlocking new possibilities in art, design, and science.

- Increased Productivity: Automates content creation, code generation, and design tasks, boosting human efficiency.

- Personalization at Scale: Allows for highly customized content generation for individual users or specific contexts.

- Democratization of Creation: Lowers the barrier to entry for creative and technical tasks, making powerful tools accessible.

- Problem Solving: Can propose candidate solutions to complex problems (e.g., drug discovery, material design) where the search space is too large for humans to explore by hand.

Trade-offs/Limitations

- Data Dependency: Requires massive, high-quality, and diverse data sets for training, which can be expensive and time-consuming to acquire.

- Computational Cost: Training and running large generative models are extremely resource-intensive, requiring powerful GPUs/TPUs and Cloud Computing.

- Lack of True Understanding: Generative AI learns statistical patterns; it doesn’t “understand” concepts, emotions, or ethics in a human-like way.

- Control & Predictability: Controlling the output of generative models can be challenging; they sometimes produce unexpected or undesirable results.

Risks & Guardrails

- Misinformation & Deepfakes: The ability to generate realistic but fake text, images, and videos poses significant risks for misinformation, propaganda, and fraud.

- Bias Amplification: If trained on biased data, generative AI can perpetuate and amplify stereotypes, creating harmful content. Strong guardrails and bias detection are crucial.

- Copyright & Ownership: Questions arise about the copyright of AI-generated content and the ethics of using copyrighted material in training data sets.

- Job Displacement: Automation of creative tasks could lead to job displacement in certain sectors.

- Hallucinations: LLMs can generate plausible-sounding but factually incorrect information, requiring grounding and human-in-the-loop verification.

- Guardrails: Robust governance, ethical guidelines, content provenance tracking, human-in-the-loop review, and strong security measures are essential.
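One of the guardrails listed above, content provenance tracking, can be sketched in a few lines. The example records a fingerprint of each generated piece of content so later copies can be checked against it. The record fields and the model name are assumptions made for illustration; a production system would more likely rely on a signed, standardized format than on an ad hoc dictionary like this one.

```python
import hashlib
import json
from datetime import datetime, timezone

def make_provenance_record(content, model_name, prompt):
    """Describe where a piece of generated content came from."""
    return {
        "sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        "model_name": model_name,
        "prompt": prompt,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "ai_generated": True,
    }

def matches_record(content, record):
    """Check whether content is byte-for-byte what the record describes."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return digest == record["sha256"]

draft = "A futuristic city at sunset."
record = make_provenance_record(draft, "example-model-v1", "describe a city")
print(json.dumps(record, indent=2))
print(matches_record(draft, record))               # True
print(matches_record(draft + " Edited.", record))  # False
```

A hash only detects exact changes to the content. It does not prove who created the record, which is why real provenance schemes add signatures on top.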

What to Do Next / Practical Guidance

Engaging with Generative AI requires a balance of excitement and caution.

- Now (Experiment & Explore):

- Use Generative Tools: Experiment with publicly available Generative AI tools (e.g., ChatGPT, Midjourney) to understand their capabilities and limitations firsthand.

- Focus on Prompts: Learn the art of “prompt engineering” – how to effectively communicate with generative AI to get desired results.

- Understand the “Why”: Recognize that these tools are pattern-matchers, not sentient beings.

- Metrics to Watch: How creative are the outputs? How much effort does it take to get a good result?

- Next (Pilot & Integrate):

- Identify Use Cases: Look for specific tasks in your work or business where Generative AI could automate content creation, accelerate design, or assist with research.

- Start Small: Implement pilot projects with clear objectives and guardrails.

- Human-in-the-Loop: Design workflows where humans review, refine, and provide context for AI-generated content, ensuring quality and ethical compliance.

- Data Ethics: Pay close attention to the ethical implications of the data used for training and the content generated.

- Metrics to Watch: Productivity gains, cost savings, content quality, and user satisfaction.

- Later (Govern & Innovate Responsibly):

- Develop Generative AI Policies: Establish clear governance policies for the ethical use, security, and compliance of Generative AI within your organization.

- Continuous Monitoring: Implement observability and monitoring systems to track the performance and potential risks (e.g., bias, misinformation) of deployed generative models.

- Invest in Auditing & Provenance: Explore tools for auditing AI-generated content and tracking its origin to combat misinformation.

- Foster Critical Thinking: Promote education and critical thinking skills to help individuals discern AI-generated content from human-created content.

- Metrics to Watch: Long-term ROI, mitigation of risks, ethical compliance, and the positive societal impact.
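The human-in-the-loop step recommended above can be as simple as a review gate: nothing generated is published until a person approves it. The sketch below is one minimal way to model that, with made-up class and function names and placeholder drafts.

```python
from dataclasses import dataclass, field

@dataclass
class Draft:
    text: str
    status: str = "pending"  # becomes "approved" or "rejected" after review
    reviewer_notes: list = field(default_factory=list)

def review(draft, approve, note=""):
    """Record a human reviewer's decision on one AI-generated draft."""
    if note:
        draft.reviewer_notes.append(note)
    draft.status = "approved" if approve else "rejected"
    return draft

def publishable(drafts):
    """Only drafts a human has explicitly approved may be published."""
    return [d.text for d in drafts if d.status == "approved"]

drafts = [Draft("Slogan A"), Draft("Slogan B"), Draft("Slogan C")]
review(drafts[0], approve=True)
review(drafts[1], approve=False, note="makes an unverifiable claim")
print(publishable(drafts))  # ['Slogan A']
```

Note that the unreviewed third draft stays out of the published set. Defaulting to "pending" rather than "approved" is the design choice that makes the gate safe when reviewers fall behind.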

Common Misconceptions

- “Generative AI is conscious or truly creative”: It learns and recombines patterns; it doesn’t possess consciousness or human-like subjective creativity.

- “Generative AI always produces factual content”: LLMs can “hallucinate” or generate incorrect information, especially when asked about things outside their training data or context.

- “Generative AI will replace all human creators”: It’s more likely to act as a powerful tool that augments human creativity and productivity, transforming roles rather than eliminating them entirely.

- “Generative AI is only for text and images”: It can create audio, video, code, molecular structures, and more.

- “All generative AI is the same”: Different models (LLMs, GANs, Diffusion) have different strengths and are better suited for different types of content generation.

Conclusion

Generative AI marks a profound shift in the capabilities of Artificial Intelligence, enabling machines to create new content rather than just predict. Across the five approaches covered here, from learning patterns to LLMs, GANs, diffusion models, and multimodal generation, we see how AI is becoming a powerful creative force. This shift promises major gains in productivity and innovation across industries. However, it also introduces significant risks related to misinformation, bias, and other ethical concerns. By embracing robust governance, implementing strong guardrails, and fostering human-in-the-loop collaboration, we can harness the transformative power of Generative AI to build a future where machines augment human creativity responsibly and beneficially.