Table of Contents

Introduction

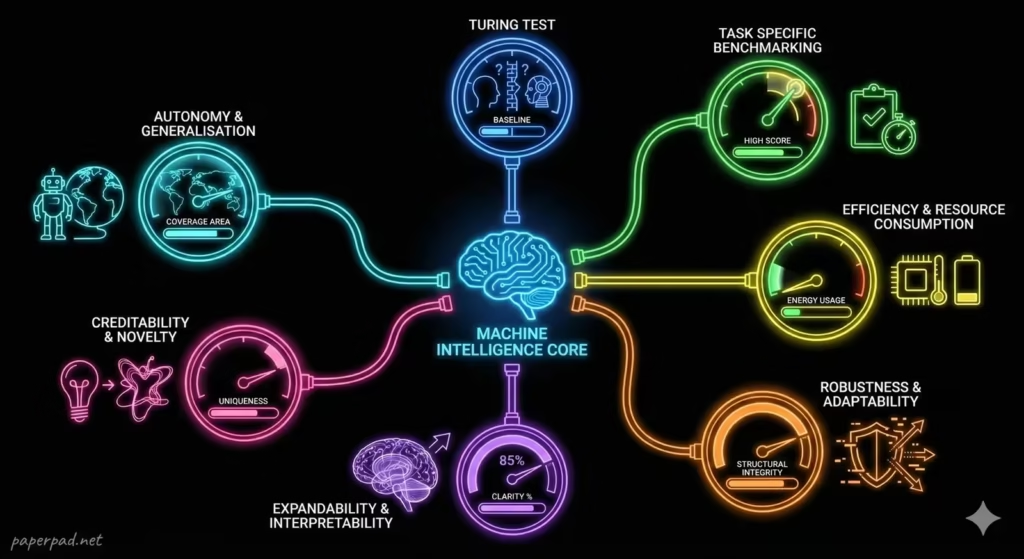

“How Do We Measure AI Intelligence?” Today, with the rapid advancement of AI, particularly in areas like AI Learning and Deep Learning, our methods for Measuring AI Intelligence have become much more sophisticated, often involving diverse benchmarking tasks and a focus on specific capabilities. Understanding these evaluation frameworks is critical for accurately assessing AI’s true progress, its autonomy, and its readiness for real-world deployment, whether in high-tech labs or in applications supporting emerging markets.

Core Concepts

AI Intelligence refers to the capabilities demonstrated by AI systems that mimic or exceed aspects of human cognitive functions. Measuring it involves assessing these capabilities against defined criteria.

Here are 7 Ways We Test AI (and the concepts behind them):

- The Turing Test (Human-like Conversation):

- Definition: Proposed by Alan Turing in 1950, this test assesses a machine’s ability to exhibit intelligent behavior equivalent to, or indistinguishable from, that of a human. A human interrogator converses with both a human and a machine via text, and if they cannot reliably tell which is which, the machine passes.

- Objective: Evaluate an AI’s capacity for natural language understanding and generation, and its ability to simulate human-level intelligence in conversation.

- Analogy: It’s like a blind tasting for intelligence – can you tell the difference between the human-made cake and the machine-made one purely by taste?

- Task-Specific Benchmarking (Performance Metrics):

- Definition: The most common modern approach. AI is evaluated on its performance in specific, well-defined tasks (e.g., image recognition, language translation, game playing). Performance is measured using quantitative metrics like accuracy, precision, recall, F1-score, or win rate.

- Objective: Quantify an AI’s proficiency in a narrow domain.

- Analogy: Giving a student a math test. We measure their intelligence in math by how many problems they get right and how quickly.

- Efficiency & Resource Consumption:

- Definition: Beyond just getting the right answer, how efficiently does the AI do it? This involves measuring latency (how fast it responds), computational cost (CPU/GPU cycles, energy), and memory usage.

- Objective: Assess the practical viability and scalability of an AI system for real-world deployment, especially important under constraints like limited power or bandwidth.

- Analogy: Not just getting the right answer on the math test, but doing it quickly and using minimal scratch paper.

- Robustness & Adaptability:

- Definition: How well does the AI perform when faced with noisy data, adversarial attacks (deliberate attempts to fool it), or slight variations in its environment or input data? Can it adapt to new, unforeseen situations?

- Objective: Evaluate an AI’s resilience and generalizability beyond its training data, crucial for safety and reliability.

- Analogy: Can the student solve the math problem even if it’s phrased slightly differently, or if there’s some distracting noise in the room?

- Explainability & Interpretability (XAI):

- Definition: As discussed with XAI, this measures the AI’s ability to articulate why it made a particular decision in a human-understandable way. While not directly a measure of “intelligence,” it’s a measure of its utility and trustworthiness.

- Objective: Assess an AI’s capacity for transparency, crucial for trust, accountability, and compliance.

- Analogy: The student can not only solve the math problem but can also explain how they arrived at the answer, showing their work and reasoning.

- Creativity & Novelty:

- Definition: Can the AI generate novel and valuable outputs (e.g., art, music, text, designs) that are not simply recombinations of its training data? This is particularly relevant for Generative AI.

- Objective: Explore an AI’s ability to produce original content and contribute to creative tasks.

- Analogy: The student not only solves the math problem but also invents a new, elegant way to solve it.

- Autonomy & Generalization (Beyond Narrow Tasks):

- Definition: How much human intervention is required for the AI to learn, adapt, and operate? Can it apply knowledge learned in one domain to solve problems in a completely different, but related, domain? This moves towards the concept of Artificial General Intelligence (AGI).

- Objective: Assess an AI’s level of independence and its capacity for broad, human-like intelligence.

- Analogy: The student can not only solve math problems but also apply logical thinking to solve problems in science or history, and learn new subjects independently.

These diverse approaches, often used in combination, provide a more holistic picture of an AI’s capabilities.

How It Works

Measuring AI intelligence often involves a structured workflow of testing and evaluation.

- Define the Objective: What aspect of intelligence are we trying to measure? (e.g., “Can the AI translate French to English with human-level fluency?”).

- Select Benchmarking Task & Dataset: Choose a specific task (e.g., translating a set of news articles) and a relevant, diverse dataset for testing. This dataset acts as the context for the evaluation.

- Establish Metrics: Define the quantitative metrics for success (e.g., BLEU score for translation quality, accuracy for image recognition, win rate for games).

- Run the AI System: The AI agent performs the task on the test dataset.

- Collect Results & Evaluate: The system’s outputs are compared against the desired outcomes, and the chosen metrics are calculated. This often involves human-in-the-loop evaluation for qualitative aspects (e.g., human judges for Turing tests or content quality).

- Analyze & Interpret: The results are analyzed to understand the AI’s strengths, weaknesses, and areas for improvement. This might include using XAI techniques to understand why certain errors occurred.

- Iterate & Improve: The insights from the evaluation feed back into the AI development pipeline, leading to model refinement and further testing, forming a continuous feedback loop.

This entire process is often subject to governance and compliance standards, especially in critical applications.

Real-World Examples

Modern AI evaluation is highly practical and often domain-specific.

- Measuring Language Model Fluency (NLP):

- Scenario: Evaluating a Large Language Model’s (LLM) ability to generate coherent and grammatically correct text.

- How it works: LLMs are tested on tasks like text completion, summarization, or answering questions. Metrics include BLEU (Bilingual Evaluation Understudy) for translation quality, ROUGE (Recall-Oriented Understudy for Gisting Evaluation) for summarization, and human evaluation for overall coherence and relevance. The workflow involves feeding prompts, generating responses, and then using automated tools or human judges for benchmarking.

- Emerging Market Context: For LLMs designed for local languages (e.g., Swahili, Hindi), measuring intelligence involves ensuring not just grammatical correctness but also cultural nuance and context-appropriateness, which often requires human-in-the-loop evaluators who are native speakers.

- Autonomous Driving Performance (Robotics/Computer Vision):

- Scenario: Assessing the intelligence of a self-driving car.

- How it works: This involves extensive real-world and simulated testing. Metrics include miles driven without human intervention, collision rates, reaction times, and ability to navigate complex scenarios (e.g., bad weather, unexpected obstacles). The AI agent (the car’s software) demonstrates autonomy within strict guardrails, and its intelligence is measured by its safety and reliability in dynamic environments.

- Emerging Market Context: Measuring self-driving intelligence in areas with less structured roads, unpredictable traffic, varying infrastructure, or informal addressing systems requires adapting benchmarking to these unique constraints, focusing on robustness in diverse and challenging conditions.

- Medical Image Analysis (Computer Vision):

- Scenario: An AI system detects anomalies in medical scans (e.g., identifying tumors in X-rays).

- How it works: The AI’s intelligence is measured by its diagnostic accuracy. Metrics include sensitivity (correctly identifying disease), specificity (correctly identifying healthy cases), and AUC (Area Under the Receiver Operating Characteristic Curve). This is a task-specific benchmarking where the AI’s intelligence is its ability to reliably and accurately assist human clinicians.

- Emerging Market Context: In regions with limited access to specialists, AI’s intelligence in this domain can significantly enhance healthcare. Measuring its intelligence must also account for diverse patient populations and potentially lower-quality imaging equipment, requiring robust adaptability.

Benefits, Trade-offs, and Risks

Benefits

- Track Progress: Provides a standardized way to measure advancements in AI capabilities.

- Guide Development: Helps developers identify AI’s strengths and weaknesses, guiding future research and development.

- Build Trust: Transparent evaluation methods foster confidence in AI systems.

- Inform Policy: Objective measures are essential for policymakers to regulate and govern AI responsibly.

- Resource Allocation: Helps prioritize investment in AI areas with proven intelligence and ROI.

Trade-offs/Limitations

- Narrow vs. General Intelligence: Most current AI excels at narrow tasks, making it hard to measure “general intelligence” in a meaningful way.

- “Gaming” the Test: AI can be specifically trained to pass certain tests without truly understanding the underlying concepts (e.g., an LLM might pass a test but still “hallucinate”).

- Human Bias in Evaluation: Human evaluators can introduce their own biases, especially in subjective tests like the Turing Test.

- Complexity of Metrics: No single metric captures the full spectrum of intelligence. Combining multiple metrics is often necessary but complex.

- Dynamic Nature of AI: AI capabilities are constantly evolving, making established benchmarks quickly obsolete.

Risks & Guardrails

- Misleading Metrics: Over-reliance on a single or flawed metric can lead to a false sense of AI capability or progress, impacting adoption.

- Ethical Concerns in Testing: Testing AI, especially in real-world scenarios (e.g., autonomous vehicles), must adhere to strict ethical guardrails and safety protocols.

- Bias in Benchmarks: If the datasets used for benchmarking are biased, the measured intelligence might not accurately reflect performance across diverse populations.

- Lack of Transparency: Opaque evaluation processes can hinder trust and prevent independent verification of AI claims.

- “Intelligence Washing”: Companies might exaggerate AI capabilities based on narrow test results, leading to unrealistic expectations.

What to Do Next / Practical Guidance

Engaging with AI requires a nuanced understanding of its intelligence.

- Now (Question the Claims):

- Be Skeptical: When you hear claims about AI’s intelligence, ask: “How was that measured?” and “What specific task was it performing?”

- Focus on Specifics: Don’t generalize. An AI that excels at chess isn’t necessarily intelligent at medical diagnosis.

- Understand Metrics: Familiarize yourself with basic performance metrics like accuracy, and understand their limitations.

- Metrics to Watch: How well does an AI perform its intended function?

- Next (Demand Transparency & Context):

- Ask for Benchmarks: For AI products or services, inquire about their benchmarking results and the datasets used for evaluation.

- Consider Context: Always evaluate AI intelligence within its specific domain and the constraints of its environment. An AI that works well in a controlled lab might fail in the unpredictable real world.

- Human-in-the-Loop: For critical applications, ensure there’s always a human-in-the-loop for oversight and validation, regardless of AI’s measured intelligence.

- Metrics to Watch: How robust is the AI to variations? How much autonomy does it have, and under what conditions?

- Later (Contribute to Responsible Evaluation):

- Support Diverse Benchmarks: Advocate for benchmarks that reflect real-world diversity and cover a wider range of capabilities.

- Promote XAI: Encourage the development and use of XAI to understand why AI makes decisions, complementing purely performance-based metrics.

- Participate in Governance: Engage in discussions about standards and governance for AI evaluation and deployment.

- Metrics to Watch: Focus on long-term ROI of AI, its ethical impact, and its ability to generalize and adapt to new challenges.

Common Misconceptions

- “The Turing Test is the ultimate measure of AI intelligence”: It’s a foundational concept but only tests a narrow aspect (conversational human-likeness) and has many limitations.

- “AI is getting smarter like humans”: Current AI is becoming extremely proficient at specific tasks. General intelligence (AGI) is still a distant goal.

- “Higher accuracy always means better AI”: Not always. An AI with 99% accuracy but significant bias against a minority group is not “better” than one with 95% accuracy but fair outcomes.

- “We can build an AI IQ test”: Human intelligence is too complex and multifaceted to be captured by a single test, and the same applies even more so to diverse AI systems.

- “AI intelligence is static”: AI, especially with continuous learning, can evolve and improve, making monitoring and updated benchmarking crucial.

Conclusion

Measuring AI Intelligence is a multifaceted challenge, moving far beyond simple tests to embrace a diverse set of benchmarking tasks and evaluation metrics. While early ideas like the Turing Test laid the groundwork, modern AI assessment focuses on performance, efficiency, robustness, explainability, creativity, and autonomy within specific domains. Understanding these varied approaches is critical for accurately gauging AI’s progress, ensuring its responsible development, and ultimately, building trustworthy systems that deliver genuine value while adhering to ethical guardrails and societal expectations.