Introduction

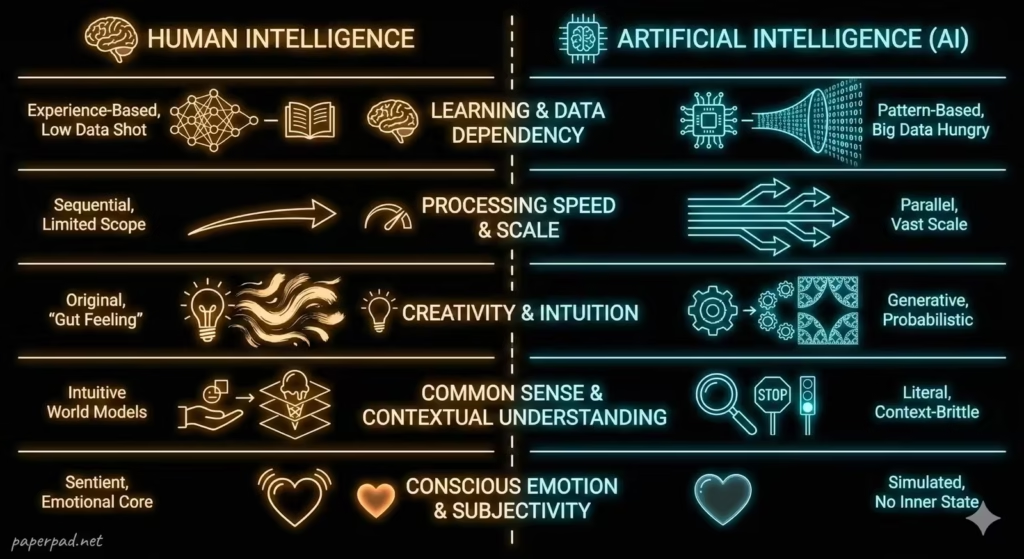

The rise of Artificial Intelligence (AI) has inevitably led to comparisons with our own intellect. When we talk about AI vs. Human Intelligence, we’re not just comparing two different types of brains; we’re exploring two fundamentally distinct ways of thinking, learning, and interacting with the world. AI can now perform tasks that were once exclusively human domains, such as playing chess, translating languages, or even creating art, yet the underlying mechanisms and capabilities remain profoundly different. Understanding these distinctions is crucial for leveraging AI’s strengths, mitigating its weaknesses, and designing effective human-AI collaboration models. It also helps ensure that technology serves humanity responsibly, whether in advanced research or in supporting communities in emerging markets.

Core Concepts

Let’s break down the fundamental differences between AI Intelligence and Human Intelligence:

- Learning Mechanism & Data Dependency:

- Human Intelligence: Primarily learns through experience, observation, interaction, and reasoning. We can learn from very few examples, generalize broadly, and adapt based on abstract concepts and common sense. Learning is often incremental and iterative, building on prior knowledge in complex ways.

- AI Intelligence: Primarily learns through Machine Learning, either by finding patterns in vast datasets (supervised and unsupervised learning) or by trial-and-error in defined environments (Reinforcement Learning). It typically requires significant amounts of data to achieve proficiency and often struggles to generalize beyond its training data without fine-tuning or additional engineering.

- Analogy: A human child learns to identify a cat after seeing just a few examples. An AI might need thousands of labeled cat images to achieve similar accuracy, and even then, might struggle if the cat is in an unusual pose or lighting condition it hasn’t “seen” before.

- Processing Speed & Scale:

- Human Intelligence: Excellent at parallel processing of diverse sensory inputs and complex, abstract thought. However, our processing speed for raw data is relatively slow, and we struggle with extremely large datasets or highly repetitive tasks.

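- AI Intelligence: Excels at fast, high-volume data processing and complex calculations. With enough computing power, AI can analyze datasets far too large for any human to review, identify minute patterns, and perform repetitive tasks tirelessly and consistently, although it can still make errors, especially on inputs unlike its training data.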

- Analogy: A human can quickly understand the nuanced emotions in a conversation (complex, abstract processing). An AI can process millions of financial transactions in milliseconds (fast, high-volume processing).

- Creativity & Intuition:

- Human Intelligence: Possesses genuine creativity, intuition, and the ability to imagine entirely novel concepts. We can connect disparate ideas, innovate, and make decisions based on gut feelings or incomplete information.

- AI Intelligence: Can generate novel outputs (e.g., art, music, text) but typically does so by learning patterns from existing data and recombining them. Its “creativity” is algorithmic, based on its training, not subjective experience or genuine insight. It lacks intuition and relies on explicit algorithms and data.

- Analogy: A human artist can conceive of a new style of painting never seen before. An AI artist can generate stunning works in existing styles or combine them, but the spark of truly novel artistic vision remains human.

- Common Sense & Contextual Understanding:

- Human Intelligence: Possesses vast common sense knowledge about how the world works, enabling us to understand nuanced situations, adapt to unexpected events, and infer meaning from limited information. We understand the physical and social context.

- AI Intelligence: Lacks inherent common sense. While it can process language and images, it doesn’t “understand” the world in the way humans do. It operates based on statistical correlations and patterns learned from data, making it brittle when faced with situations outside its training context.

- Analogy: A human knows that if you push a glass off a table, it will fall and likely break. An AI might predict the glass will fall based on physics simulations, but it doesn’t “know” the consequence of breakage in the same intuitive, common-sense way.

- Consciousness, Emotion & Subjectivity:

- Human Intelligence: Is inextricably linked to consciousness, self-awareness, emotions, and subjective experience. These qualitative aspects drive our motivations, ethical reasoning, and understanding of purpose.

- AI Intelligence: Does not possess consciousness, self-awareness, emotions, or subjective experience. It simulates intelligence through computation. Its “goals” are programmed objectives, not internally motivated desires. While it can recognize and even simulate emotions, it doesn’t feel them.

- Analogy: A human can feel joy, sorrow, and empathy. An AI can analyze facial expressions and speech patterns to predict human emotions, or generate text that expresses emotion, but it does not experience those emotions itself.

These core differences highlight that AI and human intelligence are complementary, rather than competing, forms of cognition.

How It Works

Understanding the distinct workflow and architecture of human versus AI intelligence helps define their respective roles.

Human Intelligence Workflow:

- Sensory Input: Raw data from our senses (sight, sound, touch, etc.).

- Perception & Interpretation: Our brain processes this, filtering and interpreting based on prior knowledge, context, and emotional state.

- Contextual Integration & Common Sense: Integrate new information with vast amounts of stored common sense and world knowledge.

- Reasoning & Problem Solving: Apply logical, intuitive, and creative thinking to form hypotheses, make decisions, or generate solutions.

- Learning & Adaptation: Continuously update knowledge and modify behavior based on new experiences, often with very few examples.

- Action & Feedback: Perform an action, observe its outcome, and integrate that feedback for future learning. This is a highly flexible, adaptive, and often emotionally driven feedback loop.

AI Intelligence Workflow (simplified):

- Data Input: Structured or unstructured data (images, text, numbers) fed into the system.

- Feature Extraction: Raw data is transformed into numerical features relevant to the algorithm. (In Deep Learning, this is often automated).

- Algorithmic Processing: Data is processed according to predefined algorithms (e.g., neural networks, decision trees) to identify patterns.

- Pattern Recognition & Prediction: Based on learned patterns, the AI makes a prediction or decision.

- Evaluation & Refinement: Performance is measured against specific metrics, and the algorithm is refined based on errors (often requiring human intervention or a reward signal for Reinforcement Learning).

- Output & Action: The AI provides an output (e.g., a classification, a generated text, a recommended action). This workflow is precise, data-driven, and lacks subjective experience.
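To make the AI workflow above more concrete, here is a minimal sketch of the same steps in plain Python. It is a toy word-overlap classifier, not how production systems are built; the data, the labels, and every function name (`extract_features`, `train`, `predict`, `evaluate`) are hypothetical and invented purely for illustration.

```python
from statistics import mean

# Data input: hypothetical toy examples of (text, label), invented for illustration.
TRAIN = [
    ("refund my order please", "billing"),
    ("charged twice on my card", "billing"),
    ("app crashes on login", "technical"),
    ("cannot reset my password", "technical"),
]
TEST = [
    ("my card was charged twice", "billing"),
    ("login screen crashes", "technical"),
]


def extract_features(text):
    """Feature extraction: turn raw text into a set of lowercase words."""
    return set(text.lower().split())


def train(examples):
    """Algorithmic processing: collect the words seen for each label."""
    vocab = {}
    for text, label in examples:
        vocab.setdefault(label, set()).update(extract_features(text))
    return vocab


def predict(model, text):
    """Pattern recognition: pick the label whose vocabulary overlaps most.

    Ties go to the alphabetically first label, so an input with no known
    words still receives a label.
    """
    words = extract_features(text)
    return max(sorted(model), key=lambda label: len(words & model[label]))


def evaluate(model, examples):
    """Evaluation: fraction of examples predicted correctly."""
    return mean(1.0 if predict(model, t) == y else 0.0 for t, y in examples)


model = train(TRAIN)
accuracy = evaluate(model, TEST)

# Output: a prediction for a new input, plus the measured accuracy.
print(predict(model, "charged twice"), accuracy)
```

Notice that `predict` always returns some label, even for a question like "where is the nearest store" that shares no words with the training data. That is a small-scale picture of the brittleness described earlier: the system answers from learned overlap, not from understanding.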

Real-World Examples

The interplay between AI and human intelligence is evident in many applications.

- Medical Diagnosis:

- AI’s Role: An AI system can analyze vast amounts of medical images (X-rays, MRIs) and patient data, identifying subtle patterns indicative of disease with high accuracy and speed. It excels at processing large-scale, structured information.

- Human’s Role: A human doctor provides the critical context: interpreting the AI’s findings in light of the patient’s individual history, symptoms, lifestyle, and emotional state. They apply common sense, intuition, empathy, and ethical judgment to make a final diagnosis and treatment plan. This is a prime example of human-AI collaboration where the AI acts as a powerful tool for the human expert.

- Autonomous Vehicles:

- AI’s Role: The AI in a self-driving car processes sensor data (cameras, lidar, radar) many times per second, identifying objects, predicting movements, and executing precise maneuvers based on pre-programmed rules and learned patterns. It performs complex, repetitive tasks quickly and consistently.

- Human’s Role: A human driver (or remote operator) provides oversight, especially in complex or unforeseen situations. The human can apply common sense to navigate ambiguous situations (e.g., an unexpected road obstruction not in the AI’s training data) or intervene in emergencies, providing the ultimate guardrail. The human also defines the ethical constraints for the AI.

- Customer Service in Emerging Markets:

- AI’s Role: Chatbots powered by AI can handle a high volume of routine customer inquiries, answer FAQs, and direct customers to relevant resources, often in multiple languages. This shortens response times and reduces cost, especially in areas with limited human agent availability.

- Human’s Role: A human customer service agent handles complex, emotionally charged, or unusual requests that require empathy, creative problem-solving, or deep contextual understanding. The human agent interprets subtle cues, builds rapport, and offers personalized solutions beyond the AI’s capabilities, ensuring customer satisfaction and building trust.
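One common way to split work between AI and humans in examples like these is to let the AI handle a case only when it is confident, and hand everything else to a person. The sketch below assumes the AI reports a confidence score between 0 and 1; the `Prediction` class, the `route` function, and the 0.8 threshold are all hypothetical choices for illustration, not part of any particular product.

```python
from dataclasses import dataclass

# Assumed cut-off; a real deployment would tune this on its own data.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Prediction:
    answer: str
    confidence: float  # assumed to lie between 0.0 and 1.0


def route(prediction, threshold=CONFIDENCE_THRESHOLD):
    """Return 'ai' when the model is confident enough, otherwise 'human'."""
    return "ai" if prediction.confidence >= threshold else "human"


# Invented example cases: one routine inquiry, one unusual request.
routine = Prediction("Your order ships in 3 days.", 0.95)
unusual = Prediction("Possibly a billing dispute?", 0.40)

print(route(routine), route(unusual))
```

A confidence score is only a rough signal, so in high-stakes settings such as medical diagnosis a human would typically review the AI’s output regardless of the score.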

Benefits, Trade-offs, and Risks

Benefits of Understanding the Differences

- Optimal Collaboration: Leads to better design of human-AI collaboration systems that draw on the strengths of both.

- Responsible AI Deployment: Helps define appropriate roles for AI, ensuring it’s used where it excels and augmented by humans where it falls short.

- Innovation: Inspires the creation of AI that complements human abilities, opening new frontiers.

- Ethical AI Development: Crucial for setting guardrails, ensuring accountability, and addressing bias.

Trade-offs/Limitations

- Over-reliance on AI: Mistaking AI’s task proficiency for genuine human-like intelligence can lead to dangerous over-reliance and errors.

- Misconceptions & Fear: A lack of understanding fuels unrealistic expectations (“AGI is here!”) or unfounded fears (“AI will replace us all!”).

- Complexity: The interaction between AI and human intelligence is complex and constantly evolving, requiring continuous learning and adaptation.

Risks & Guardrails

- Deskilling: Over-automation by AI without understanding human roles could lead to a decline in human skills.

- Loss of Accountability: If AI decisions are not understood or overseen by humans, it can lead to a loss of accountability for errors or harms.

- Reinforcement of Bias: AI can perpetuate human biases if not carefully designed and monitored, impacting fairness.

- Ethical Dilemmas: AI’s lack of consciousness and emotion means it cannot inherently make ethical judgments; these must be programmed as guardrails or handled by humans.

- Unintended Consequences: AI’s narrow intelligence can lead to unexpected and potentially harmful outcomes if its constraints and limitations are not well understood by human operators.
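Monitoring for bias, as mentioned above, can start with something very simple: comparing how often an AI system gives a favorable outcome to different groups. The sketch below computes approval rates per group and the gap between them. The data, the group names, and the function names are invented for illustration, and this is only one of several possible fairness checks; a large gap is a prompt for human review, not proof of unfairness.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def max_rate_gap(decisions):
    """Return the difference between the highest and lowest approval rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Invented decisions for two hypothetical groups, "A" and "B".
DECISIONS = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(selection_rates(DECISIONS), max_rate_gap(DECISIONS))
```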

What to Do Next / Practical Guidance

Navigating the future means embracing both AI and human intelligence thoughtfully.

- Now (Embrace Complementarity):

- Identify Strengths: Understand what AI does well (speed, data processing, repetitive tasks) and what humans do well (creativity, empathy, common sense, ethical judgment).

- Look for Hybrid Solutions: Think about how AI can augment human capabilities, rather than replace them.

- Educate Yourself: Continuously learn about AI’s advancements and its limitations.

- Metrics to Watch: Focus on how well AI assists humans, rather than just its standalone performance.

- Next (Design for Collaboration):

- Human-Centric Design: When building or adopting AI systems, prioritize human needs and how AI can best support human decision-making.

- Clear Interfaces: Design intuitive interfaces that allow humans to understand AI’s inputs, outputs, and explanations (XAI).

- Define Roles: Clearly delineate responsibilities between human and AI agents in any workflow.

- Training & Change Management: Prepare your workforce for new ways of working with AI, focusing on skill development for human-AI collaboration.

- Metrics to Watch: Evaluate “human productivity with AI,” “error reduction with AI,” and “user satisfaction with AI assistance.”

- Later (Govern & Evolve):

- Establish Ethical Frameworks: Develop robust governance and ethical guardrails for human-AI interaction, especially in high-stakes areas.

- Foster Continuous Learning: Both humans and AI will need to continuously learn and adapt as technology evolves.

- Focus on AGI Research Responsibly: For those in research, pursue Artificial General Intelligence (AGI) with extreme caution and ethical oversight.

- Metrics to Watch: Long-term societal impact, ethical compliance, and the evolution of human skills in an AI-powered world.
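As a small worked example of the “error reduction with AI” metric mentioned above, the sketch below compares the error rate of humans working alone with that of humans assisted by AI. The counts are invented, and the function names are hypothetical; the point is only to show the arithmetic.

```python
def error_rate(errors, cases):
    """Return the fraction of cases that contained an error."""
    if cases <= 0:
        raise ValueError("cases must be positive")
    return errors / cases


def relative_error_reduction(baseline_rate, assisted_rate):
    """Return how much of the baseline error rate the assisted workflow removed."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - assisted_rate) / baseline_rate


# Invented counts: 200 cases handled each way.
human_only = error_rate(20, 200)
human_with_ai = error_rate(10, 200)
reduction = relative_error_reduction(human_only, human_with_ai)

print(f"Errors fell by {reduction:.0%} with AI assistance")
```

Measuring the assisted workflow against a human-only baseline, rather than the AI on its own, keeps the focus on how well AI supports people.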

Common Misconceptions

- “AI is becoming conscious”: There’s no scientific evidence that current AI possesses consciousness, self-awareness, or subjective experience.

- “AI will replace all human jobs”: AI is more likely to automate individual tasks than entire jobs, transforming some roles and creating new ones in which humans and AI work together.

- “AI is smarter than humans”: AI can be “smarter” in specific, narrow tasks (e.g., chess), but lacks the broad, general intelligence, common sense, and creativity of humans.

- “AI makes unbiased decisions”: AI can carry and even amplify human biases if its data or design is flawed.

- “Human intelligence is static”: Human intelligence is dynamic. It keeps learning, adapting, and creating, and it will continue to evolve alongside AI.

Conclusion

The comparison of AI vs. Human Intelligence reveals not a competition, but a profound complementarity. AI excels at speed, data processing, and repetitive tasks through precise algorithms and vast datasets, while human intelligence brings creativity, common sense, emotional depth, and ethical reasoning to the table. Understanding these fundamental differences is not just intellectually fascinating; it’s critical for designing effective human-AI collaboration, setting appropriate guardrails, fostering responsible AI adoption, and ensuring that artificial intelligence serves as a powerful tool to augment, rather than diminish, our shared human future.