Introduction

Imagine talking to your computer, asking it a question, and getting a perfectly sensible answer. Or having a document automatically summarized, or an email translated instantly. These capabilities are powered by Natural Language Processing (NLP), a branch of Artificial Intelligence (AI) focused on enabling computers to understand, interpret, and generate human language. Understanding how AI teaches computers language is valuable for anyone engaging with modern AI: NLP underpins everything from your smartphone’s voice assistant to business intelligence tools, and it is changing how we interact with technology and with each other, especially in multilingual and diverse global contexts.

Core Concepts

Natural Language Processing is a multidisciplinary field combining computer science, artificial intelligence, and computational linguistics. Its primary objective is to process and analyze large amounts of natural language data.

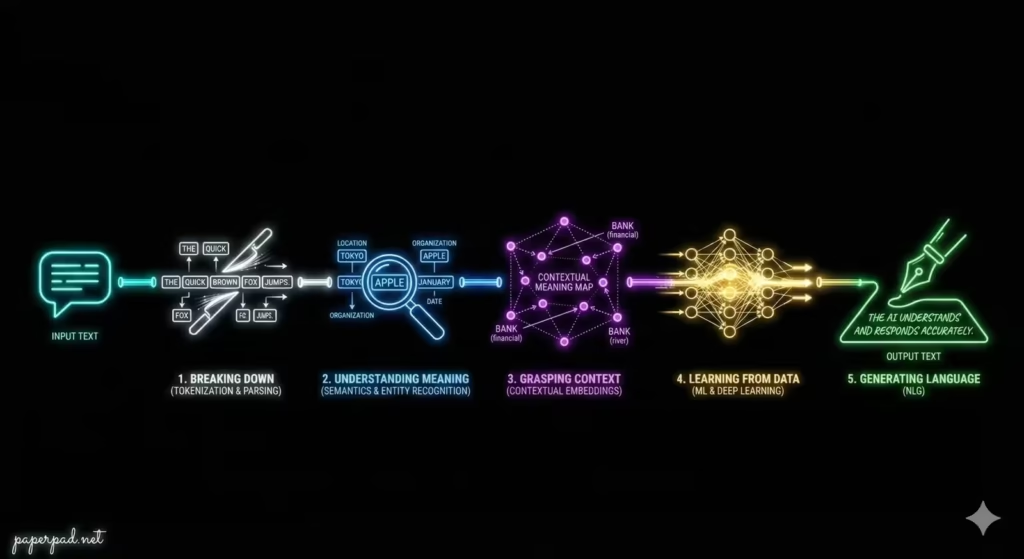

Here are five ways AI teaches computers language:

- Breaking Down the Basics (Tokenization & Parsing):

- Definition: Before a computer can understand a sentence, it needs to break it into manageable pieces. Tokenization splits text into words or phrases (tokens), and Parsing analyzes the grammatical structure of sentences to understand relationships between words.

- Analogy: Imagine trying to understand a foreign language. First, you need to identify individual words (tokenization), then understand how they fit together grammatically to form sentences (parsing). Without these basic steps, comprehension is impossible.

- Understanding Meaning (Semantics & Entity Recognition):

- Definition: Beyond grammar, NLP aims to grasp what text means. Semantic Analysis works out the meaning of words and sentences and how they relate to concepts. Named Entity Recognition (NER) identifies and classifies key information in text, such as names of people, organizations, locations, and dates.

- Analogy: You hear the word “Apple.” Does it mean the fruit or the company? Semantic analysis helps determine the correct meaning based on context. NER would identify “Tim Cook” as a person and “Apple Inc.” as an organization.

- Grasping Context (Contextual Embeddings & Language Models):

- Definition: Language is highly contextual. The meaning of a word can change based on the surrounding words. Modern NLP uses contextual embeddings (e.g., from pretrained language models such as BERT and GPT) to represent each occurrence of a word as a vector that depends on the words around it, rather than as a single fixed entry. Because these models learn from word usage across millions of sentences, this allows AI to capture nuance.

- Analogy: The word “bank” means different things in “river bank” versus “savings bank.” Contextual embeddings allow the AI to understand the appropriate meaning based on the other words in the phrase, much like a human does.

- Learning from Data (Machine Learning & Deep Learning):

- Definition: NLP relies heavily on Machine Learning (ML) and especially Deep Learning (DL). Algorithms are trained on vast datasets of text and speech to learn patterns, grammar rules, and semantic relationships without being explicitly programmed for every single rule.

- Analogy: Instead of giving a computer a dictionary and a grammar textbook (which is impossible for all nuances of human language), you give it millions of books, articles, and conversations, and it learns the rules by observing patterns, much like a child learns language by listening and reading.

- Generating Language (Natural Language Generation – NLG):

- Definition: Once computers can understand language, they can also generate it. Natural Language Generation (NLG) enables AI to produce coherent, grammatically correct, and contextually appropriate text, ranging from simple responses to complex reports.

- Analogy: This is like the computer not just understanding the foreign language, but being able to speak and write it fluently itself, crafting new sentences that convey meaning effectively.

Together, these five techniques are the building blocks that allow computers to work with human language.
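As a toy illustration of two of the ideas above (tokenization, and using context to pick a word sense), here is a minimal Python sketch. It is not how BERT or GPT work: the `SENSE_CUES` table and the `guess_sense` helper are hypothetical, hand-written stand-ins for associations that a real model would learn from data.

```python
import re

def tokenize(text):
    """Split text into lowercase word tokens and standalone punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text.lower())

# Hypothetical cue words for two senses of "bank". A real contextual model
# learns such associations from data instead of a hand-written table.
SENSE_CUES = {
    "financial institution": {"savings", "money", "account", "loan", "deposit"},
    "river edge": {"river", "water", "fishing", "shore", "muddy"},
}

def guess_sense(tokens):
    """Pick the sense whose cue words overlap most with the surrounding tokens."""
    context = set(tokens)
    scores = {sense: len(cues & context) for sense, cues in SENSE_CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

tokens = tokenize("She opened a savings account at the bank.")
print(tokens)
print(guess_sense(tokens))                                  # financial institution
print(guess_sense(tokenize("We sat on the river bank.")))   # river edge
```

The same word, “bank,” gets a different label depending only on its neighbors, which is the intuition behind contextual embeddings, even though real models use learned vectors rather than word lists.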

How It Works

An NLP system typically follows a multi-stage pipeline to process language:

- Input: Raw human language (text or speech).

- Preprocessing:

- Tokenization: Breaks text into words/sentences.

- Normalization: Converts text to a standard form (e.g., lowercasing, removing punctuation).

- Part-of-Speech Tagging: Identifies if a word is a noun, verb, adjective, etc.

- Lemmatization/Stemming: Reduces words to their base form (e.g., “running” to “run”).

- Feature Extraction/Embedding:

- Words and sentences are converted into numerical representations (embeddings) that capture their meaning and context. This is crucial for ML models.

- Model Application (Machine Learning/Deep Learning):

- A trained ML/DL algorithm processes these numerical representations to perform a specific task (e.g., sentiment analysis, translation, question answering).

- Output Generation (NLG):

- If the task requires generating text, an NLG component converts the AI’s internal decision back into human-readable language.

- Evaluation & Feedback:

- The output is evaluated (often by humans or with task-specific metrics), and that feedback is used to refine the model. Each stage of the workflow is designed around the task’s objective and its constraints, such as latency and cost.
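The preprocessing and feature-extraction stages above can be sketched in a few lines of standard-library Python. This is a simplified illustration under stated assumptions: `crude_stem` is a hypothetical suffix-stripper, far cruder than a real stemmer or lemmatizer, and the bag-of-words count vector is a much simpler numerical representation than the learned embeddings the text describes.

```python
import re
from collections import Counter

def tokenize(text):
    """Normalize (lowercase, drop punctuation) and split into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def crude_stem(word):
    """Strip a few common English suffixes. Illustrative only."""
    for suffix in ("ing", "ed", "s"):
        if suffix == "s" and word.endswith("ss"):
            continue  # leave words like "glass" alone
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stem = word[: -len(suffix)]
            # Undo consonant doubling, e.g. "runn" -> "run".
            if suffix != "s" and stem[-1] == stem[-2] and stem[-1] not in "aeiou":
                stem = stem[:-1]
            return stem
    return word

def preprocess(text):
    """Tokenize, normalize, and stem a raw string."""
    return [crude_stem(tok) for tok in tokenize(text)]

def bag_of_words(tokens, vocabulary):
    """Turn tokens into a count vector aligned with the vocabulary order."""
    counts = Counter(tokens)
    return [counts[term] for term in vocabulary]

docs = ["The dogs are running.", "A dog runs fast!"]
processed = [preprocess(d) for d in docs]
vocabulary = sorted({tok for doc in processed for tok in doc})
vectors = [bag_of_words(doc, vocabulary) for doc in processed]

print(vocabulary)  # ['a', 'are', 'dog', 'fast', 'run', 'the']
print(vectors)     # [[0, 1, 1, 0, 1, 1], [1, 0, 1, 1, 1, 0]]
```

After stemming, “dogs”/“dog” and “running”/“runs” map to the same vocabulary entries, so the two sentences share features that a downstream model can use.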

Real-World Examples

NLP is behind many common technologies and is making a significant impact globally.

- Chatbots & Virtual Assistants:

- Scenario: You ask your smartphone assistant “What’s the weather like today?” or interact with a customer service chatbot.

- How it works: NLP enables the AI to understand your spoken or typed query (Natural Language Understanding, or NLU), determine your intent, extract key information (e.g., “weather,” “today”), query a database or API, and then generate a natural-sounding response (NLG). All of this has to happen in near real time. In modern assistants, the model often acts as an AI agent that uses function calling to reach those databases and APIs.

- Emerging Market Context: Chatbots are revolutionizing access to information and services in regions with low literacy rates or limited access to traditional customer support. NLP allows users to interact in their native language, breaking down language barriers and improving adoption of digital services.

- Machine Translation:

- Scenario: You use Google Translate to understand a foreign language website or communicate with someone speaking a different language.

- How it works: Advanced NLP models, particularly Deep Learning-based Large Language Models (LLMs), learn to map sentence structures and meanings from one language to another by analyzing massive parallel corpora (the same text written in two languages). They don’t just translate word-for-word but aim to convey the context and nuance.

- Emerging Market Context: Machine translation is vital for global communication, trade, and education, allowing individuals and businesses to overcome language barriers. It opens up information and markets that were previously inaccessible, supporting economic inclusion and better business returns.

- Sentiment Analysis for Market Research:

- Scenario: A company wants to understand public opinion about its new product by analyzing social media posts and customer reviews.

- How it works: NLP algorithms process vast amounts of text data, identifying the emotional tone (positive, negative, neutral) and specific opinions expressed about the product. This helps businesses gauge customer satisfaction, identify emerging trends, and respond quickly to issues, providing critical insights for decision-making. The AI’s objective is to extract sentiment, often using a pipeline of text processing and classification.

- Emerging Market Context: Understanding local market sentiment can be challenging due to diverse languages, slang, and cultural expressions. NLP models trained on local data can provide invaluable market intelligence, helping businesses tailor products and services more effectively.
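To make the sentiment analysis example above concrete, here is a minimal lexicon-based sketch in Python. It is an assumption-laden toy: the word lists are hypothetical and tiny, and production systems typically use trained classifiers rather than fixed lists. It does show the basic pipeline of text processing followed by classification.

```python
import re

# Hypothetical mini-lexicon for illustration; real systems use large
# lexicons or classifiers trained on labeled reviews.
POSITIVE = {"great", "love", "excellent", "fast", "good"}
NEGATIVE = {"bad", "slow", "broken", "hate", "terrible"}
NEGATORS = {"not", "never", "no"}

def sentiment(text):
    """Classify text as positive, negative, or neutral by counting cue words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        polarity = (tok in POSITIVE) - (tok in NEGATIVE)
        # Flip polarity when the previous token is a negator ("not good").
        if polarity and i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

reviews = [
    "I love this phone, the camera is excellent",
    "Battery life is not good and the screen arrived broken",
    "It is a phone",
]
for review in reviews:
    print(sentiment(review), "|", review)
```

A fixed English word list like this fails on slang, sarcasm, and other languages, which is why models trained on local data matter in the markets described above.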

Benefits, Trade-offs, and Risks

Benefits

- Enhanced Communication: Bridges the gap between human language and computer processing, making technology more accessible.

- Automation: Automates repetitive language-based tasks (e.g., customer service, document summarization).

- Information Extraction: Quickly extracts key information and insights from vast amounts of unstructured text.

- Global Reach: Facilitates communication and understanding across language barriers.

- Improved User Experience: Makes interactions with technology more natural and intuitive.

Trade-offs/Limitations

- Contextual Nuance: Human language is incredibly nuanced, with sarcasm, irony, and cultural references that AI still struggles to fully grasp.

- Ambiguity: Words and phrases can have multiple meanings, making accurate interpretation challenging for AI.

- Data Dependency: NLP models require vast amounts of high-quality, diverse training data, which can be expensive and difficult to acquire, especially for less common languages.

- Computational Cost: Processing and training complex NLP models, especially LLMs, can be very resource-intensive, impacting latency and cost.

Risks & Guardrails

- Bias Amplification: If trained on biased text data, NLP models can perpetuate or amplify stereotypes and discriminatory language. Strong guardrails and bias mitigation are crucial.

- Misinformation & Malicious Use: NLP-generated text can be used to create convincing fake news, phishing emails, or propaganda, posing security and ethical risks.

- Privacy Concerns: NLP models often process sensitive personal information, requiring robust privacy safeguards and compliance with data protection regulations.

- Misinterpretation: Errors in NLP can lead to misunderstandings, incorrect decisions, or even harm in critical applications (e.g., medical, legal).

- Hallucinations: Large Language Models (LLMs) can sometimes generate plausible-sounding but factually incorrect information, requiring grounding and human-in-the-loop verification.

What to Do Next / Practical Guidance

Engaging with NLP means understanding its power and its pitfalls.

- Now (Explore & Experience):

- Use NLP Tools: Experiment with voice assistants, translation apps, and grammar checkers to see NLP in action.

- Observe Limitations: Pay attention to instances where NLP tools struggle with your language or context.

- Understand Data’s Role: Recognize that the quality and diversity of training data heavily influence NLP performance.

- Metrics to Watch: How well does the NLP system understand your intent? How natural does its generated response sound?

- Next (Apply & Evaluate):

- Identify Use Cases: Look for specific problems in your work or business where NLP could automate tasks or extract insights (e.g., analyzing customer feedback, categorizing documents).

- Pilot Projects: Start with small, well-defined NLP projects.

- Data Preparation: Prioritize collecting and cleaning relevant text data for your specific NLP task.

- Human-in-the-Loop: For critical applications, design a workflow where human experts review and refine NLP outputs.

- Metrics to Watch: Focus on task-specific metrics like accuracy, precision, recall for classification, and human evaluation for fluency and coherence of generated text.

- Later (Govern & Innovate):

- Ethical NLP Guidelines: Develop internal governance and ethical guardrails for using NLP, especially concerning bias, privacy, and misinformation.

- Continuous Monitoring: Implement robust observability and monitoring systems to track NLP model performance and detect drift or emerging bias over time.

- Invest in Language Diversity: Support the development of NLP models for underserved languages, especially in emerging markets, to promote inclusivity and adoption.

- Stay Updated: The field of NLP is evolving rapidly, with new architectures and algorithms emerging constantly.

- Metrics to Watch: Long-term ROI, user satisfaction, compliance with regulations, and the positive societal impact of NLP deployment.
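The classification metrics named in the “Next” stage above (accuracy, precision, recall) are simple to compute by hand. The sketch below uses plain Python; the function name `classification_metrics` and the sample labels are made up for illustration.

```python
def classification_metrics(y_true, y_pred, positive_label):
    """Compute accuracy, plus precision and recall for one positive class."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive_label and p == positive_label for t, p in pairs)
    fp = sum(t != positive_label and p == positive_label for t, p in pairs)
    fn = sum(t == positive_label and p != positive_label for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    return {
        "accuracy": correct / len(pairs) if pairs else 0.0,
        # Of everything predicted positive, how much really was positive?
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        # Of everything truly positive, how much did the model find?
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Made-up labels for five reviews: human judgment vs. model prediction.
y_true = ["pos", "pos", "neg", "neg", "pos"]
y_pred = ["pos", "neg", "neg", "pos", "pos"]
print(classification_metrics(y_true, y_pred, positive_label="pos"))
```

Here the model gets 3 of 5 reviews right (accuracy 0.6), and both precision and recall for the positive class are 2/3. Watching precision and recall separately shows whether errors come from false alarms or from missed cases.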

Common Misconceptions

- “NLP means computers think like humans”: NLP enables computers to process language, but it doesn’t equate to human-like understanding, consciousness, or common sense.

- “NLP is always accurate”: NLP models can make errors, especially with ambiguous language, sarcasm, or data outside their training context.

- “One NLP model fits all languages”: While some LLMs are multilingual, performance often varies significantly across languages due to data availability and linguistic complexity.

- “NLP solves all communication problems”: NLP is a powerful tool, but it’s not a silver bullet for communication. Human nuance and context are still paramount.

- “Only linguists or data scientists need to understand NLP”: Anyone interacting with or deploying AI systems that use language will benefit from a basic understanding of NLP’s capabilities and limitations.

Conclusion

Natural Language Processing (NLP) represents a major leap in AI’s ability to interact with the human world. By breaking NLP down into its core components, from parsing grammar to grasping context and generating text, we see how AI learns to work with our language. While its algorithms and Machine Learning power applications like chatbots and translation, it’s crucial to acknowledge NLP’s constraints and potential risks, such as bias and misinterpretation. By establishing strong guardrails, ensuring human-in-the-loop oversight, and committing to ethical governance, we can harness NLP’s transformative power to enhance communication, drive global inclusion, and build AI that truly speaks our language.