Introduction

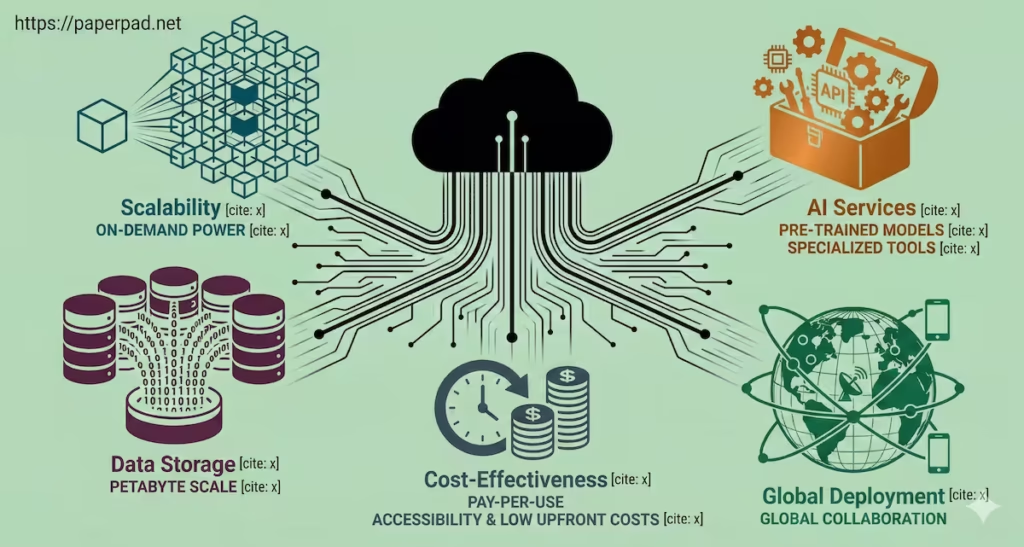

While Edge AI brings intelligence closer to the source, the real powerhouse behind today’s AI innovation remains Cloud Computing. The question “What is the Role of Cloud Computing in AI’s Scalability and Power?” is central to understanding how modern AI models are trained, deployed, and continuously improved. The cloud provides the on-demand, elastic resources that let AI teams train larger models, manage immense data sets, and scale to meet demand. It’s the engine room driving AI’s growth, making sophisticated AI accessible globally and fueling its widespread adoption, even in emerging markets where local compute resources might be limited.

Core Concepts

Cloud Computing refers to the delivery of on-demand computing services—including servers, storage, databases, networking, software, analytics, and intelligence—over the Internet (“the cloud”). For AI, the cloud is not just a storage locker; it’s a dynamic, powerful computing environment.

Here are 5 Powerful Ways Cloud Fuels AI’s Growth:

- Unmatched Scalability & On-Demand Resources:

- Definition: Cloud platforms offer very large pools of computational resources (CPUs, GPUs, TPUs) that can be scaled up or down within minutes, subject to provider quotas and availability. This means AI developers can access immense power for short bursts (like training a massive Deep Learning model) without owning expensive hardware.

- Analogy: Imagine needing a supercomputer for a few hours. In the past, you’d have to buy one. With the cloud, you can rent a supercomputer-equivalent on demand, use it, and then release it, paying only for what you use. This flexibility is key to AI’s scalability.

- Access to Massive Data Storage & Processing:

- Definition: Modern AI thrives on data. The cloud provides petabyte-scale storage solutions and powerful data processing tools (e.g., data lakes, data warehouses, streaming analytics) necessary to manage, clean, and prepare the colossal data sets required for Machine Learning training.

- Analogy: The cloud is like an infinitely expanding library with the fastest librarians and research assistants imaginable. It can store every book ever written and instantly find specific information or patterns within them.

- Cost-Effectiveness & Accessibility:

- Definition: By paying for computing resources as a service (pay-as-you-go), businesses and researchers avoid the massive upfront capital expenditure of building and maintaining their own AI infrastructure. This democratizes access to powerful AI, making it accessible to startups and smaller organizations globally.

- Analogy: Instead of buying a car (capital expenditure) that sits idle most of the time, you use a ride-sharing service (cloud computing), paying only for the journeys you take.

- Specialized AI Services & Tools:

- Definition: Cloud providers offer a growing suite of ready-to-use AI services (e.g., pre-trained Computer Vision APIs, Natural Language Processing (NLP) services, Generative AI models) that can be easily integrated into applications. They also provide comprehensive development tools and frameworks.

- Analogy: The cloud isn’t just raw computing power; it’s a fully stocked kitchen with pre-made sauces, specialized appliances, and expert chefs (pre-trained models and services) you can use without having to build them from scratch.

- Global Deployment & Collaboration:

- Definition: Cloud infrastructure is distributed globally, allowing AI models to be deployed closer to users worldwide, reducing latency and improving user experience. It also fosters seamless collaboration among geographically dispersed AI development teams.

- Analogy: A global orchestra can practice and perform together, even if its members are on different continents, all connected by a shared, high-speed musical platform.

These capabilities make the cloud an indispensable partner in the workflow and architecture of modern AI development and deployment.
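The rent-versus-buy trade-off behind the scalability and cost-effectiveness points can be made concrete with a little arithmetic. The sketch below is illustrative only: the purchase price and hourly rate are hypothetical figures, not real provider pricing, and the break-even calculation ignores power, cooling, staffing, and depreciation.

```python
def on_demand_cost(hours: float, hourly_rate: float) -> float:
    """Total cost of renting compute for `hours` at `hourly_rate`."""
    return hours * hourly_rate


def break_even_hours(purchase_price: float, hourly_rate: float) -> float:
    """Rented hours at which renting has cost as much as buying outright.

    Simplified: ignores power, cooling, staffing, and depreciation.
    """
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_price / hourly_rate


# Hypothetical figures, for illustration only (not real pricing).
GPU_SERVER_PRICE = 200_000.0  # assumed price of an on-premise GPU server
GPU_HOURLY_RATE = 25.0        # assumed on-demand rate for a comparable instance

burst_cost = on_demand_cost(hours=72, hourly_rate=GPU_HOURLY_RATE)
threshold = break_even_hours(GPU_SERVER_PRICE, GPU_HOURLY_RATE)

print(f"72-hour training burst: ${burst_cost:,.0f}")
print(f"Renting matches the purchase price after {threshold:,.0f} hours")
```

Under these assumed numbers, short bursts strongly favor renting, while hardware that would be busy around the clock for a long time shifts the balance back toward ownership.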

How It Works

The workflow of AI in the cloud leverages its distributed and scalable nature.

- Data Ingestion & Storage:

- Raw data (text, images, sensor readings) from various sources (e.g., IoT devices, web applications, databases) is ingested into cloud storage solutions (e.g., data lakes). This can be petabytes of information.

- Data Processing & Preparation:

- Cloud-based data processing tools (e.g., Spark, Hadoop managed services) are used to clean, transform, and prepare the raw data into a suitable format for Machine Learning training. This often involves massive parallel processing.

- Model Training:

- AI developers provision virtual machines equipped with powerful GPUs or TPUs from the cloud provider’s pool, anywhere from a handful to thousands depending on the size of the model.

- A Deep Learning model is then trained on the prepared data set. This process can take hours or days, consuming immense computational resources. The cloud’s scalability allows this to happen on demand, rather than waiting for available hardware.

- Model Evaluation & Optimization:

- Trained models are evaluated using cloud-based services, and hyperparameter tuning (optimizing model settings) is performed, often using automated cloud tools.

- Model Deployment & Inference:

- The trained and optimized model is deployed as an API (Application Programming Interface) or integrated into cloud-based applications. When a user requests a prediction (e.g., image recognition, translation), the cloud model performs inference and returns the result. This can be scaled up or down based on demand.

- Monitoring & Management:

- Cloud observability tools continuously monitor the AI model’s performance, latency, and resource usage. This allows for proactive maintenance, updates, and retraining, forming a continuous feedback loop.

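This entire pipeline runs within the cloud’s managed environment: the provider secures the underlying infrastructure, while the team using it remains responsible for configuring access controls, encryption, and the compliance and governance policies that apply to its data.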
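The six stages above can be sketched end to end in a few lines. This is a toy, single-process illustration rather than a cloud implementation: every function name is hypothetical, the in-memory list stands in for cloud storage, a one-variable least-squares fit stands in for Deep Learning training, and a plain Python function stands in for a deployed API.

```python
import statistics
import time
from typing import Callable, Optional


def ingest() -> list[tuple[Optional[float], Optional[float]]]:
    """Stage 1: stand-in for reading raw (feature, label) records from storage."""
    return [(1.0, 2.1), (2.0, 3.9), (None, 5.0), (3.0, 6.2), (4.0, None), (5.0, 9.8)]


def prepare(raw: list[tuple[Optional[float], Optional[float]]]) -> list[tuple[float, float]]:
    """Stage 2: clean the data by dropping incomplete records."""
    return [(x, y) for x, y in raw if x is not None and y is not None]


def train(data: list[tuple[float, float]]) -> tuple[float, float]:
    """Stage 3: fit y = slope * x + intercept by ordinary least squares."""
    xs = [x for x, _ in data]
    ys = [y for _, y in data]
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in data)
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def evaluate(model: tuple[float, float], data: list[tuple[float, float]]) -> float:
    """Stage 4: mean squared error (a real pipeline would use held-out data)."""
    slope, intercept = model
    return statistics.fmean([(slope * x + intercept - y) ** 2 for x, y in data])


def deploy(model: tuple[float, float]) -> Callable[[float], float]:
    """Stage 5: wrap the model in a prediction function (stand-in for an API)."""
    slope, intercept = model
    return lambda x: slope * x + intercept


def monitored(predict: Callable[[float], float], latencies: list[float]) -> Callable[[float], float]:
    """Stage 6: record the latency of every prediction request."""
    def wrapper(x: float) -> float:
        start = time.perf_counter()
        result = predict(x)
        latencies.append(time.perf_counter() - start)
        return result
    return wrapper


prepared = prepare(ingest())
model = train(prepared)
mse = evaluate(model, prepared)
latencies: list[float] = []
predict = monitored(deploy(model), latencies)
prediction = predict(6.0)
print(f"kept {len(prepared)} records, MSE={mse:.4f}, prediction for 6.0={prediction:.2f}")
```

In a real deployment each stage would typically be a separate managed service, and the recorded latencies and error metrics would feed the retraining loop described in the monitoring step.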

Real-World Examples

Cloud AI underpins many powerful and accessible AI applications.

- Large Language Models (LLMs) & Generative AI:

- Scenario: Training the next generation of LLMs, like those behind ChatGPT or Bard.

- How it works: Developing these models requires processing a large share of the publicly available text and code on the internet, often hundreds of billions or even trillions of tokens. This is only feasible using the massive compute power of large data centers, which most teams can reach only through the cloud. The cloud provides the necessary GPUs/TPUs, storage, and networking to enable this unprecedented scale of Deep Learning training. Without this kind of infrastructure, such models would be out of reach for nearly every organization.

- Global Impact: Cloud-trained LLMs are then made available as services, enabling developers globally (including in emerging markets) to build powerful NLP applications without needing their own supercomputers.

- Precision Agriculture & Satellite Imagery Analysis:

- Scenario: Farmers in large agricultural regions (including developing nations) use AI to optimize crop yields and manage resources.

- How it works: Satellites and drones capture vast amounts of imagery of farmlands. This massive data set is uploaded to the cloud. Computer Vision models, trained in the cloud, analyze these images to detect plant health issues, predict yields, or monitor irrigation needs across millions of acres. The cloud’s scalability allows for rapid processing of this immense visual data.

- Emerging Market Context: While data collection might be local (via drones), the cloud’s ability to process and analyze this data at scale, and then deliver actionable insights via simple mobile apps, is transforming agriculture in regions where local compute power is a significant constraint, directly improving food security and ROI.

- Personalized Recommendations at Scale:

- Scenario: Netflix suggesting movies or Amazon recommending products to millions of users simultaneously.

- How it works: These platforms use Machine Learning algorithms (often Deep Learning) that are continuously trained in the cloud on petabytes of user behavior data (views, purchases, clicks). The cloud’s scalability allows these models to be constantly updated and to serve personalized recommendations to millions of users globally with low latency, ensuring a seamless user experience.
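Serving predictions to a fluctuating audience, as in the recommendation scenario, depends on inference capacity following demand. The sketch below shows one simple sizing rule: divide the request rate by what a single replica can handle, round up, and clamp to configured bounds. The function name, the per-replica capacity, and the load figures are all hypothetical, and real autoscalers usually add smoothing and cooldown periods.

```python
import math


def replicas_needed(
    requests_per_second: float,
    capacity_per_replica: float,
    min_replicas: int = 1,
    max_replicas: int = 100,
) -> int:
    """Number of model replicas needed to serve the given request rate."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    wanted = math.ceil(requests_per_second / capacity_per_replica)
    # Clamp so the service never scales to zero or beyond its budget ceiling.
    return max(min_replicas, min(max_replicas, wanted))


# Hypothetical request rates sampled across a day (requests per second).
observed_load = [120, 80, 950, 4200, 300]
plan = [replicas_needed(rps, capacity_per_replica=200) for rps in observed_load]
print(plan)  # [1, 1, 5, 21, 2]
```

The quiet periods run on a single replica and the peak runs on 21, which is the pay-for-what-you-use behavior the examples in this section rely on.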

Benefits, Trade-offs, and Risks

Benefits

- Unprecedented Scale: Enables training of massive AI models and processing of colossal data sets.

- Cost-Efficiency: Reduces upfront capital expenditure, making AI accessible via pay-as-you-go models.

- Speed of Innovation: Rapid provisioning of resources accelerates experimentation and deployment of new AI solutions.

- Global Reach: Deploy AI applications closer to users worldwide, improving latency and user experience.

- Managed Services: Access to pre-built AI tools and infrastructure management, reducing operational overhead.

Trade-offs/Limitations

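- Data Transfer Costs: Even when compute is cost-effective, moving large data sets out of the cloud can incur significant network egress charges. Inbound transfer is often free, but uploading very large data sets still takes time and bandwidth.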

- Vendor Lock-in: Relying heavily on one cloud provider’s specific AI services can make it difficult to switch providers.

- Security & Privacy Concerns: While cloud providers offer robust security, entrusting sensitive data to a third party always carries inherent privacy and security considerations.

- Internet Dependency: Cloud AI inherently requires stable and fast internet connectivity, which can be a constraint in some regions.
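The data transfer trade-off is easy to underestimate, so a rough estimate helps before committing to an architecture. The following sketch assumes free ingress and a flat per-gigabyte egress rate; both rates are hypothetical placeholders, and actual pricing is usually tiered and varies by provider and region.

```python
def transfer_cost(
    gigabytes_in: float,
    gigabytes_out: float,
    ingress_per_gb: float = 0.0,   # assumption: inbound transfer is free
    egress_per_gb: float = 0.09,   # hypothetical flat outbound rate in USD
) -> float:
    """Estimated network transfer cost in USD for one billing period."""
    if gigabytes_in < 0 or gigabytes_out < 0:
        raise ValueError("transfer volumes must be non-negative")
    return gigabytes_in * ingress_per_gb + gigabytes_out * egress_per_gb


# Uploading a 200 TB data set, then exporting 50 TB of results and checkpoints.
upload_only = transfer_cost(gigabytes_in=200_000, gigabytes_out=0)
with_export = transfer_cost(gigabytes_in=200_000, gigabytes_out=50_000)

print(f"Upload only: ${upload_only:,.2f}")
print(f"Upload plus 50 TB export: ${with_export:,.2f}")
```

Under these assumptions the cost comes entirely from what leaves the cloud, which is one reason the vendor lock-in concern and the egress concern tend to reinforce each other.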

Risks & Guardrails

- Data Breaches: Despite robust security, a breach in the cloud could expose vast amounts of sensitive data. Strong guardrails include encryption, access controls, and regular security audits.

- Cost Overruns: Without careful monitoring and resource management, cloud costs can escalate rapidly, impacting ROI. Guardrails include budgets, spending alerts, and automatic shutdown of idle resources.

- Regulatory Compliance: Ensuring compliance with diverse global data residency and privacy laws (e.g., GDPR) when using globally distributed cloud resources can be complex.

- Latency for Edge Devices: For applications requiring instant, real-time responses (like autonomous driving), cloud AI alone is insufficient, necessitating Edge AI.

- Ethical Implications: The immense power of cloud AI (e.g., for surveillance, content generation) demands strong ethical governance and oversight.
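For the cost overrun risk in particular, a basic guardrail is to project month-end spend from spend so far and raise a flag before the budget is actually exceeded. The sketch below uses a simple linear projection; the function names, the 80% warning threshold, and the dollar amounts are assumptions chosen for illustration, and the spend figure would in practice come from the provider's billing export.

```python
def projected_month_end_spend(
    spend_to_date: float, day_of_month: int, days_in_month: int = 30
) -> float:
    """Linear projection: assume the average daily spend so far continues."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be within the month")
    return spend_to_date / day_of_month * days_in_month


def budget_status(
    spend_to_date: float,
    day_of_month: int,
    monthly_budget: float,
    days_in_month: int = 30,
    warn_ratio: float = 0.8,  # assumption: warn at 80% of budget
) -> str:
    """Return 'ok', 'warning', or 'over' based on projected spend."""
    projected = projected_month_end_spend(spend_to_date, day_of_month, days_in_month)
    if projected > monthly_budget:
        return "over"
    if projected > warn_ratio * monthly_budget:
        return "warning"
    return "ok"


# Hypothetical: $4,000 spent by day 10 against a $10,000 monthly budget.
status = budget_status(spend_to_date=4_000, day_of_month=10, monthly_budget=10_000)
print(status)  # over
```

A linear projection misses bursty workloads such as a single large training run, so it works best alongside per-project budgets and alerts on individual resources.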

What to Do Next / Practical Guidance

For most serious AI projects, Cloud Computing is close to essential. The steps below are grouped by time horizon.

- Now (Assess Your Needs):

- Data Volume: How much data do you have or expect to generate? If it’s large, the cloud is likely your best bet.

- Compute Needs: Do you need immense computational power for training that you don’t own?

- Budget & Expertise: Do you prefer operational cost (cloud) over capital expenditure (on-premise hardware)? Do you have the in-house expertise to manage complex infrastructure?

- Metrics to Watch: Current compute resource utilization, data storage needs, and estimated training times for your AI models.

- Next (Pilot & Plan):

- Start Small: Begin with a pilot project, using cloud resources for a specific AI task (e.g., training a small model, using a pre-trained API).

- Cost Management: Implement strict monitoring and budgeting for cloud usage to control cost.

- Security & Compliance: Work with your cloud provider to ensure your architecture meets your privacy and compliance requirements.

- Hybrid Strategy: Consider a hybrid approach that combines the strengths of cloud AI (training, large-scale inference) with Edge AI (local, real-time inference).

- Metrics to Watch: Cloud cost vs. performance, latency of cloud-based inference, and scalability achieved during peak loads.

- Later (Scale & Govern):

- Cloud-Native AI: Design your AI workflow to fully leverage cloud-native services and tools for optimal efficiency and scalability.

- AI Governance Framework: Establish robust governance policies for data management, model deployment, and ethical use of AI within the cloud environment.

- Continuous Optimization: Regularly review cloud usage and AI model performance to optimize cost, latency, and resource utilization.

- Talent Development: Invest in training your teams on cloud AI platforms and best practices.

- Metrics to Watch: Long-term ROI, global adoption rates, compliance adherence, and overall impact on business objectives.
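The hybrid strategy recommended above comes down to a routing decision per request: use the edge when the latency budget is tight, and the cloud when there is time to reach a larger model. Here is a minimal sketch of that decision. The `Target` class, the latency figures, and the accuracy scores are hypothetical values that a team would replace with its own measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """An inference location with measured characteristics (values assumed)."""
    name: str
    expected_latency_ms: float
    relative_accuracy: float  # higher is better


def choose_target(latency_budget_ms: float, targets: list[Target]) -> Target:
    """Pick the most accurate target that still meets the latency budget."""
    eligible = [t for t in targets if t.expected_latency_ms <= latency_budget_ms]
    if not eligible:
        raise ValueError("no target can meet the latency budget")
    return max(eligible, key=lambda t: t.relative_accuracy)


# Hypothetical measurements for a small on-device model and a large cloud model.
EDGE = Target(name="edge", expected_latency_ms=15.0, relative_accuracy=0.90)
CLOUD = Target(name="cloud", expected_latency_ms=180.0, relative_accuracy=0.97)

real_time = choose_target(latency_budget_ms=50, targets=[EDGE, CLOUD])
batch = choose_target(latency_budget_ms=500, targets=[EDGE, CLOUD])
print(real_time.name, batch.name)  # edge cloud
```

Tracking the latency of cloud-based inference, one of the metrics listed under the pilot phase, is what keeps the `expected_latency_ms` values in a rule like this honest.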

Common Misconceptions

- “Cloud AI is only for big companies”: Pay-as-you-go models make cloud AI accessible to businesses of all sizes, including startups.

- “Cloud AI is less secure”: Cloud providers invest heavily in security, often exceeding what individual companies can manage, but shared responsibility models require user vigilance.

- “Cloud AI is always expensive”: While large-scale usage can be costly, its flexibility and efficiency often lead to overall cost savings compared to on-premise infrastructure.

- “Cloud AI is just for storage”: The cloud offers a full spectrum of computing services, with powerful processors being central to AI.

- “Cloud AI is the only AI”: It’s a dominant approach, but Edge AI and on-premise solutions also play crucial roles depending on the constraints and objectives.

Conclusion

The role of Cloud Computing in AI’s scalability and power is nothing short of foundational. By providing unmatched scalability, vast data processing capabilities, cost-effectiveness, and specialized tools, the cloud acts as the essential engine fueling the rapid advancement and widespread adoption of Artificial Intelligence. Understanding the five ways the cloud fuels AI’s growth is critical for anyone looking to harness AI’s full potential. While Edge AI addresses specific constraints at the local level, the cloud remains the indispensable backbone for training the most complex models, managing colossal data sets, and deploying intelligent systems globally, driving innovation and shaping the future of AI.