Introduction

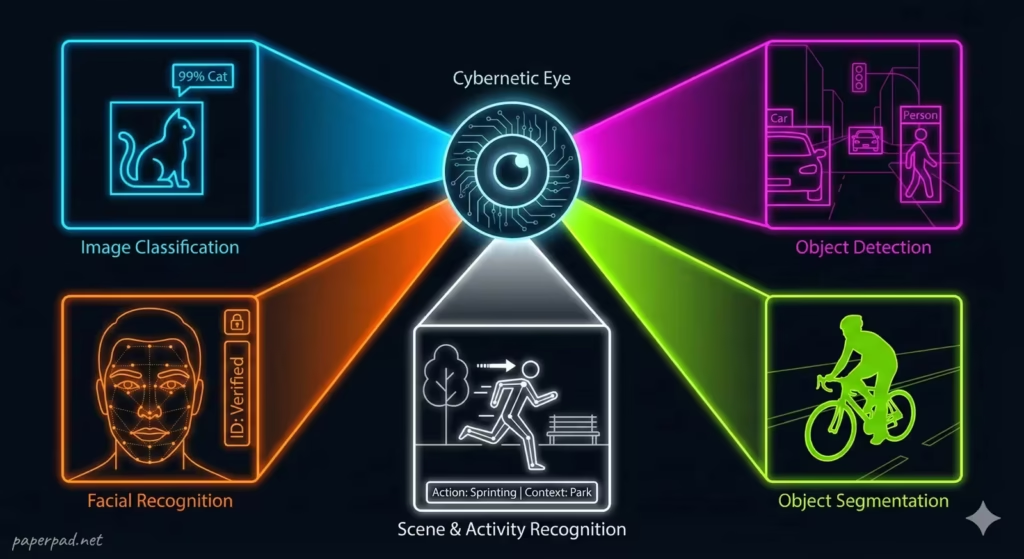

Imagine a machine that can look at a photograph and tell you exactly what’s in it: a cat, a car, a person, or even a specific emotion on a face. This incredible capability is the domain of Computer Vision, a powerful branch of Artificial Intelligence (AI) that teaches computers how to “see” and interpret the world through images and videos. It’s about more than just capturing light; it’s about translating raw visual data (pixels) into meaningful information, allowing AI to understand scenes, recognize objects, and even infer actions.

Core Concepts

Computer Vision is an interdisciplinary field that deals with how computers can gain high-level understanding from digital images or videos. Its ultimate objective is to automate tasks that the human visual system can do.

Here are 5 Powerful Ways AI Sees the World:

- Image Classification (What is it?):

- Definition: The most basic task. Given an image, the AI assigns it to one of several predefined categories (e.g., “cat,” “dog,” “car,” “tree”).

- Analogy: Imagine showing a child a flashcard and asking, “What is this?” The child identifies it as a “cat.” Computer Vision performs this same labeling task.

- Object Detection (Where is it?):

- Definition: More advanced than classification, this involves not only identifying what objects are in an image but also where they are located. The AI draws bounding boxes around each detected object and labels it.

- Analogy: Instead of just saying “there’s a cat,” the child points to the cat in a cluttered room and says, “There’s a cat here!”

- Object Segmentation (Exactly what pixels?):

- Definition: Takes object detection a step further by marking exactly which pixels belong to each object, rather than drawing a rough box around it. This gives a more precise picture of each object’s shape and extent.

- Analogy: The child not only points to the cat but can precisely trace its outline with their finger, distinguishing it perfectly from the background.

- Facial Recognition & Analysis (Who is it? What are they feeling?):

- Definition: A specialized form of object detection and classification focused on human faces. It can identify individuals, verify identity, and even attempt to infer emotions, age, or gender from facial features.

- Analogy: The child not only sees a person but recognizes their parent and can tell if they are happy or sad.

- Scene Understanding & Activity Recognition (What’s happening?):

- Definition: The most complex task, where the AI interprets the overall context of an image or video, understanding the relationships between objects and people, and recognizing activities or events.

- Analogy: The child watches a video and can describe the entire story unfolding – who is doing what, where, and what the likely outcome will be.

These capabilities are primarily driven by Deep Learning, specifically a type of neural network called a Convolutional Neural Network (CNN), which is highly effective at processing visual data.
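To make the convolution at the heart of a CNN concrete, here is a minimal sketch in plain Python. It slides a small 3x3 kernel over a toy image and records how strongly each patch matches the kernel. The `conv2d` helper, the image values, and the hand-picked Sobel-style kernel are illustrative assumptions, not code from any particular library; a real CNN learns its kernel weights from data and runs on optimized tensor libraries.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation (what CNN layers call "convolution")."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the patch under the kernel element-wise and sum it up.
            total = 0
            for di in range(kernel_h):
                for dj in range(kernel_w):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# Toy 4x6 grayscale image: dark left half (0), bright right half (9).
IMAGE = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

# Hand-written vertical-edge kernel (Sobel style); a CNN would learn such weights.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

feature_map = conv2d(IMAGE, SOBEL_X)
print(feature_map)  # [[0, 36, 36, 0], [0, 36, 36, 0]]
```

The large values in the middle columns mark where dark pixels meet bright ones, which is the kind of simple edge feature that early CNN layers tend to pick up.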

How It Works

A typical Computer Vision workflow involves several stages, often forming a complex pipeline powered by Deep Learning.

- Image/Video Acquisition:

- The raw visual data is captured by cameras, sensors, or loaded from existing databases. This is the input for the AI agent.

- Preprocessing:

- The raw visual data (pixels) is prepared for the AI model. This might involve resizing, cropping, color correction, or noise reduction to standardize the input and reduce irrelevant variation between images.

- Feature Extraction (Deep Learning’s Magic):

- This is where Deep Learning shines. Instead of humans manually identifying features (like edges or corners), a Convolutional Neural Network (CNN) automatically learns to extract hierarchical features from the image.

- The initial layers detect simple features (lines, edges).

- Intermediate layers combine these into more complex shapes (circles, textures).

- Deeper layers combine these shapes into recognizable parts of objects (e.g., an eye, a wheel).

- The final layers combine parts to recognize entire objects or scenes.

- Model Application (Classification, Detection, Segmentation):

- Based on the extracted features, the trained Deep Learning algorithm performs its specific task:

- Classifying the entire image.

- Detecting and localizing multiple objects with bounding boxes.

- Segmenting objects pixel by pixel.

- Output & Interpretation:

- The AI provides its “understanding” of the visual input (e.g., “Image contains a ‘cat’ with 98% confidence,” “Detected a ‘car’ at coordinates [X,Y] with 90% confidence”).

- This output is often visualized (e.g., bounding boxes on an image) or used to trigger further actions by the AI agent through function-calling.

- Evaluation & Feedback Loop:

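- The AI’s performance is evaluated using task-specific metrics (e.g., accuracy, precision, and recall for classification; Intersection over Union, the overlap between a predicted region and the true one, for detection and segmentation). This feedback loop helps refine the model and its architecture.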
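The output and evaluation stages above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions: the raw scores, labels, boxes, and counts are made up, boxes are assumed to be `(x_min, y_min, x_max, y_max)` tuples, and the helper names (`softmax`, `iou`, `precision_recall`) are hypothetical rather than taken from a specific framework.

```python
import math


def softmax(logits):
    """Turn raw model scores into confidences that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - intersection
    return intersection / union if union > 0 else 0.0


def precision_recall(true_pos, false_pos, false_neg):
    """Precision: share of predictions that were right. Recall: share of real objects found."""
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


# Output stage: made-up raw scores for three labels.
LABELS = ["cat", "dog", "car"]
confidences = softmax([4.0, 1.0, 0.0])
best = max(range(len(LABELS)), key=lambda i: confidences[i])
print(f"Image contains a '{LABELS[best]}' with {confidences[best]:.0%} confidence")

# Evaluation stage: compare a predicted box with a hand-labeled one.
predicted_box = (0, 0, 10, 10)
ground_truth_box = (0, 0, 10, 20)
print(iou(predicted_box, ground_truth_box))  # 0.5

# Made-up counts: 8 correct detections, 2 false alarms, 8 missed objects.
print(precision_recall(true_pos=8, false_pos=2, false_neg=8))  # (0.8, 0.5)
```

In detection benchmarks a prediction is commonly counted as correct only when its IoU with a labeled box exceeds a chosen threshold, so the two metrics are usually used together.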

Real-World Examples

Computer Vision is transforming industries and daily life globally.

- Autonomous Vehicles:

- Scenario: A self-driving car navigating city streets.

- How it works: Computer Vision is the “eyes” of the autonomous vehicle. It continuously processes live video feeds and sensor data to detect other cars, pedestrians, traffic signs, lane markings, and obstacles. This allows the AI agent to understand its environment, predict movements, and make real-time driving decisions. The workflow involves constant visual input, object detection, and scene understanding under strict safety guardrails.

- Emerging Market Context: While fully autonomous vehicles are still developing, Computer Vision helps in advanced driver-assistance systems (ADAS) in emerging markets, improving safety by detecting hazards or warning drivers of lane departures, especially on less-structured roads.

- Agricultural Monitoring & Crop Health (Emerging Markets):

- Scenario: Farmers in remote areas monitoring crop health or detecting pests without extensive human labor.

- How it works: Drones or mobile phones capture images of fields. Computer Vision algorithms analyze these images to identify signs of disease, nutrient deficiencies, or pest infestations in crops. They can also estimate yield or detect weeds. This enables precision agriculture, optimizing resource use and increasing output. Even with limited connectivity, images can be captured locally and uploaded for processing once a connection is available, providing valuable insights to smallholder farmers and improving ROI.

- Quality Control in Manufacturing:

- Scenario: An assembly line needs to quickly inspect thousands of products for defects.

- How it works: High-speed cameras capture images of products as they pass. Computer Vision algorithms are trained to distinguish acceptable products from those with defects (e.g., scratches, missing parts, incorrect labels). This automates quality inspection, often with greater speed and consistency than human inspectors, reducing waste and ensuring product consistency. The AI’s objective is to maintain quality, acting as a tireless monitoring agent.

- Medical Image Analysis:

- Scenario: AI assisting radiologists in detecting anomalies in X-rays, MRIs, or CT scans.

- How it works: Computer Vision models analyze complex medical images to identify subtle indicators of diseases like cancer, pneumonia, or fractures. They can highlight suspicious areas for human review, improving diagnostic accuracy and speed. The AI agent here acts as a powerful tool for human experts, adhering to strict privacy and compliance guardrails.

Benefits, Trade-offs, and Risks

Benefits

- Automation of Visual Tasks: Automates repetitive and time-consuming visual inspections, surveillance, and data entry.

- Enhanced Accuracy & Speed: Often surpasses human capabilities in speed and consistency for specific visual tasks.

- New Capabilities: Enables technologies like self-driving cars, facial recognition, and augmented reality.

- Data Insights: Extracts valuable quantitative data from visual information that was previously qualitative or inaccessible.

- Safety Improvement: Can enhance safety in dangerous environments (e.g., industrial inspection, hazardous material handling).

Trade-offs/Limitations

- Data Hunger: Requires massive amounts of labeled image/video data for training, which is expensive and time-consuming to acquire.

- Computational Cost: Training and running complex CNNs require significant processing power (GPUs), leading to high cost and energy consumption.

- Contextual Blindness: Can struggle to understand the broader context of a scene or to handle situations that differ from its training data.

- Vulnerability to Adversarial Attacks: Computer Vision models can be fooled by small, deliberately crafted changes to an image that a human viewer may not even notice, posing security risks.
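The adversarial weakness listed above can be illustrated with a deliberately tiny example. The sketch below uses a hypothetical linear "cat detector" with made-up weights and pixel values, not a real CNN, and nudges every pixel by a small amount in the direction that lowers the score (the idea behind the fast gradient sign method). It is meant only to show why many tiny changes can add up to a flipped prediction.

```python
# Hypothetical linear "cat detector": one weight per pixel, all values made up.
WEIGHTS = [1, -1] * 50      # 100 pixels
PIXELS = [130, 126] * 50    # intensities on a 0-255 scale


def score(pixels):
    """Weighted sum of pixels; a positive score means "cat"."""
    return sum(w * p for w, p in zip(WEIGHTS, pixels))


def classify(pixels):
    return "cat" if score(pixels) > 0 else "not cat"


def sign(value):
    return (value > 0) - (value < 0)


def perturb(pixels, epsilon):
    """Shift every pixel by at most epsilon in the direction that lowers the score.

    For a linear model, the gradient of the score with respect to a pixel
    is simply that pixel's weight, so we step against the sign of the weight.
    """
    return [p - epsilon * sign(w) for w, p in zip(WEIGHTS, pixels)]


adversarial = perturb(PIXELS, epsilon=3)
largest_change = max(abs(a - p) for a, p in zip(adversarial, PIXELS))

print(classify(PIXELS), score(PIXELS))            # cat 200
print(classify(adversarial), score(adversarial))  # not cat -100
print(largest_change)                             # 3
```

No pixel moves by more than 3 out of 255, yet the label flips, because the small shifts all push the score in the same direction. Real attacks on deep networks are more involved, but they exploit the same accumulation effect.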

Risks & Guardrails

- Bias & Discrimination: If trained on biased datasets, Computer Vision systems can exhibit bias (e.g., misidentifying faces of certain demographics), leading to unfair outcomes. Strong guardrails and diverse data are crucial.

- Privacy Invasion: Facial recognition and surveillance technologies raise significant privacy concerns. Strict governance and compliance are essential.

- Safety Failures: In critical applications like autonomous vehicles, errors in Computer Vision can have life-threatening consequences. Rigorous testing and human-in-the-loop oversight are non-negotiable.

- Misinformation & Deepfakes: Advanced Computer Vision can be used to manipulate images and videos, creating convincing fake content with potential for misuse.

- Ethical Dilemmas: The deployment of Computer Vision in areas like policing or social credit systems raises profound ethical questions about surveillance and individual liberties.

What to Do Next / Practical Guidance

Engaging with Computer Vision requires a keen eye on its capabilities and ethical implications.

- Now (Observe & Learn):

- Identify Applications: Notice where Computer Vision is already at work in your daily life (phone camera features, online photo tagging, security cameras).

- Understand Its “Eyes”: Recognize that AI “sees” differently than humans—it’s pixel-based pattern recognition.

- Question Its Limits: Consider scenarios where a Computer Vision system might fail or be fooled.

- Metrics to Watch: How accurate is its classification? How well does it detect objects in different lighting?

- Next (Experiment & Plan):

- Identify Use Cases: Look for problems in your business or field that could benefit from automated visual analysis (e.g., inventory management, defect detection, security monitoring).

- Pilot Projects: Start with small, well-defined Computer Vision projects to test feasibility and ROI.

- Data Strategy: Plan for data collection, annotation (labeling), and management—this is often the biggest constraint.

- Ethical Considerations: Before deployment, thoroughly assess potential biases, privacy implications, and safety risks.

- Metrics to Watch: Focus on task-specific accuracy, precision, recall, and latency of the visual processing.

- Later (Govern & Innovate):

- Develop Governance Frameworks: Establish clear policies for the ethical and responsible use of Computer Vision technologies, especially in public spaces or critical applications.

- Continuous Monitoring: Implement robust observability and monitoring systems to track model performance, detect bias drift, and ensure safety in production.

- Invest in XAI: Explore Explainable AI (XAI) techniques to understand why Computer Vision models make certain decisions, crucial for trust and debugging.

- Foster Human-AI Collaboration: Design systems where humans provide context and oversight, leveraging AI’s speed for visual analysis.

- Metrics to Watch: Long-term ROI, compliance with regulations, public trust, and the overall positive societal impact.

Common Misconceptions

- “Computer Vision means true understanding”: AI “sees” by recognizing patterns in pixels, not by understanding the world in the human sense of meaning or purpose.

- “It’s easy to train a Computer Vision model”: Training high-performing models requires massive, diverse, and well-labeled datasets, along with significant computational resources.

- “Computer Vision is foolproof”: CV models are sensitive to lighting, angles, and occlusions, and can be tricked by adversarial attacks.

- “Facial recognition is always accurate”: Accuracy varies significantly across demographics and conditions, often exhibiting bias.

- “AI cameras are just like human eyes”: AI cameras are more like very fast, very precise pattern detectors that lack human common sense and contextual understanding.

Conclusion

Computer Vision is a transformative field that empowers AI to “see” and interpret the visual world, leveraging Deep Learning to achieve remarkable feats in image classification, object detection, and scene understanding. The five capabilities covered here highlight its immense potential, from enabling autonomous vehicles to revolutionizing agriculture in emerging markets. However, its development and deployment demand careful attention to constraints, such as data quality and computational cost, and robust guardrails to address critical risks like bias, privacy, and safety. By embracing responsible governance and fostering human-AI collaboration, we can ensure that AI’s powerful visual capabilities augment human perception and help build a safer, more efficient, and more insightful future.