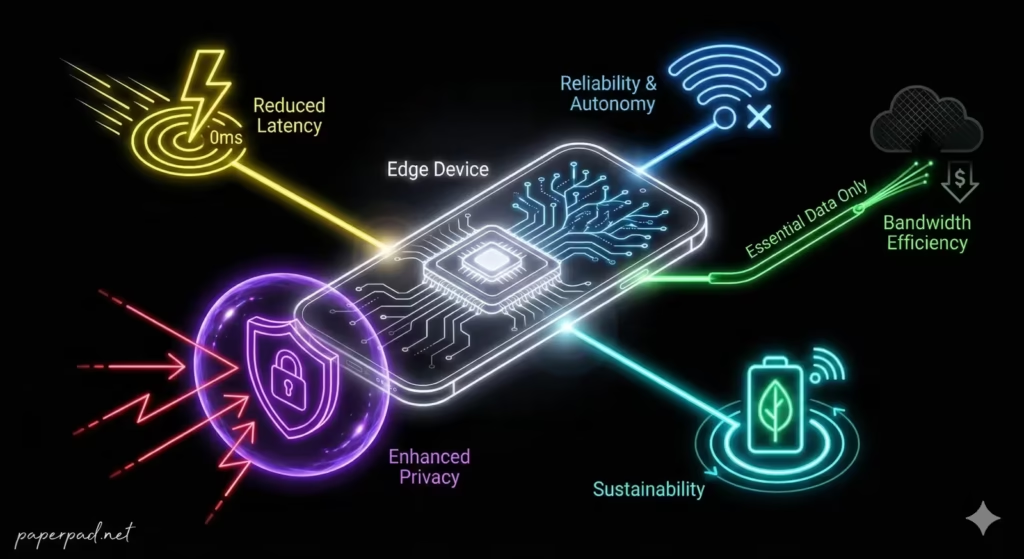

- Edge AI means running AI processing directly on local devices (“the edge”) rather than in a central cloud server.

- It’s crucial for speed, privacy, reliability, and efficiency in many real-world applications.

- Key benefits include reduced latency, lower cost, enhanced security, and improved autonomy.

- Edge AI is essential for devices with limited connectivity or strict data handling constraints.

- Understanding Edge AI is vital for designing intelligent systems that are responsive, secure, and sustainable.

Introduction

In the world of Artificial Intelligence (AI), much of the data processing traditionally happens in powerful cloud data centers. However, there’s a growing demand to bring AI capabilities much closer to where the data is actually generated: right on the device itself. This is the essence of Edge AI: instead of sending all data to the cloud for processing, data is processed locally on a smartphone, a smart camera, a factory sensor, or a drone. This shift is not just a technical trend; it’s a fundamental change in how intelligent systems are designed and deployed, unlocking new possibilities, especially in environments with limited cloud connectivity or strict privacy constraints.

Core Concepts

Edge AI refers to the deployment of AI algorithms and models directly onto local devices (the “edge”) rather than relying solely on cloud-based servers for processing. The “edge” can be anything from a smartphone or smart speaker to an industrial sensor, a security camera, or a robotic arm.

Here are five reasons AI is moving to the source of the data:

- Reduced Latency & Real-time Responsiveness:

- Definition: Processing data locally eliminates the time delay (latency) involved in sending data to the cloud and waiting for a response. This is critical for applications that require immediate action.

- Analogy: Imagine a self-driving car. It can’t afford a delay of even a fraction of a second while its AI “thinks” in the cloud. It needs to react instantly, right where the action is happening. Edge AI provides this instant decision-making.

- Enhanced Privacy & Security:

- Definition: By processing data locally, sensitive information often doesn’t need to leave the device. This significantly reduces the risk of data breaches during transmission or storage in central servers, improving privacy and security.

- Analogy: Instead of sending all your personal diary entries to a public library for analysis, you keep your diary on your desk and analyze it yourself. Your personal information stays private.

- Improved Reliability & Autonomy:

- Definition: Edge AI systems can operate independently, even without a constant internet connection. This makes them more reliable in remote areas, during network outages, or in mission-critical applications where continuous connectivity cannot be guaranteed.

- Analogy: A soldier in the field needs their equipment to work regardless of network availability. Edge AI ensures devices can function autonomously in challenging environments.

- Lower Cost & Bandwidth Usage:

- Definition: Sending large volumes of data to the cloud for processing is expensive in terms of bandwidth and cloud computing resources. Edge AI reduces these costs by only sending essential results or aggregated data to the cloud, if at all.

- Analogy: Instead of mailing every single piece of scrap paper to a central recycling plant, you sort and process your recycling at home, only sending the final, condensed product. This saves on shipping costs and effort.

- Efficient Resource Utilization & Sustainability:

- Definition: Edge devices can often perform specific AI tasks with lower power consumption than continuous cloud communication would require. Furthermore, by processing only relevant data, they avoid unnecessary data transfer and storage, contributing to more sustainable AI operations.

- Analogy: Rather than keeping a massive, energy-hungry supercomputer running 24/7 for all tasks, you use smaller, specialized machines closer to the source for specific jobs, optimizing energy use.

These reasons highlight why the workflow and architecture of many AI systems are shifting towards distributed intelligence, bringing AI’s decision-making capabilities closer to the source of information.
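To make the cost and bandwidth argument concrete, here is a rough back-of-the-envelope sketch. The bitrate, event count, and clip length are hypothetical numbers chosen only for illustration; real figures depend on the camera, codec, and scene.

```python
def monthly_upload_gb(bitrate_mbps: float, seconds_per_day: float, days: int = 30) -> float:
    """Data uploaded per month in gigabytes (1 GB = 1000 MB) at a given bitrate."""
    megabits = bitrate_mbps * seconds_per_day * days
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


# Hypothetical assumptions, not measurements:
STREAM_MBPS = 4.0      # bitrate of one video stream
EVENTS_PER_DAY = 20    # events the on-device model decides to report
CLIP_SECONDS = 10      # length of the clip sent per event

# Cloud-centric: stream everything, all day.
cloud_gb = monthly_upload_gb(STREAM_MBPS, 24 * 3600)

# Edge: analyze locally, upload only short clips for reported events.
edge_gb = monthly_upload_gb(STREAM_MBPS, EVENTS_PER_DAY * CLIP_SECONDS)

print(f"cloud streaming: {cloud_gb:.0f} GB/month")  # 1296 GB/month
print(f"edge reporting:  {edge_gb:.0f} GB/month")   # 3 GB/month
```

Under these assumptions the edge approach uploads several hundred times less data per camera, which is where the bandwidth and cloud-processing savings come from.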

How It Works

The workflow for Edge AI differs from traditional cloud-centric AI in its deployment phase.

- Model Training (Cloud/Data Center):

- Complex AI models (e.g., Deep Learning models for Computer Vision or NLP) are still typically trained in powerful cloud data centers using massive data sets. This is because training requires immense computational power.

- Model Optimization & Compression:

- Once trained, the large, complex model is often optimized and compressed to run efficiently on resource-constrained edge devices. This might involve techniques like “model quantization” or “pruning.”

- Deployment to the Edge:

- The optimized AI model is then deployed directly onto the local device (the “edge device”). This device has specialized hardware (e.g., AI accelerators, powerful CPUs/GPUs, dedicated NPUs) capable of running the model.

- Local Inference:

- The edge device continuously collects data from its sensors (e.g., camera, microphone, temperature sensor).

- The AI model on the device processes this data locally, performing inference (making predictions or decisions) in real-time.

- Action or Selective Reporting:

- Based on the inference, the edge device can take immediate action (e.g., a robot arm grasps an object, a smart camera triggers an alarm).

- Only relevant results, aggregated data, or critical events might be sent to the cloud for further analysis or storage, rather than all raw data.

- Monitoring & Update:

- The edge device’s performance can be monitored (often remotely), and the AI model can be updated or retrained (e.g., via over-the-air updates) based on new data or changing requirements, forming a feedback loop.
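The optimization step mentions quantization and pruning. The following is a minimal pure-Python sketch of both ideas on a plain list of weights, assuming symmetric per-tensor 8-bit quantization and simple magnitude pruning. The function names are illustrative; production toolchains apply these techniques per layer with calibration data and hardware-specific formats.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127] plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * fraction)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_magnitude[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


weights = [0.5, -1.27, 0.01, 0.9]  # toy example values

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
pruned = prune_by_magnitude(weights, 0.5)

print(quantized)  # small integers that fit in one byte each
print(pruned)     # the two smallest-magnitude weights are now zero
```

Quantization trades a small rounding error (at most half the scale per weight here) for a 4x reduction in storage compared with 32-bit floats, while pruning creates zeros that sparse-aware runtimes can skip.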

This architecture prioritizes local processing while maintaining connection to a broader system.
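The local inference and selective reporting steps can be sketched as a simple loop. Everything here is a hypothetical stand-in: `toy_model` replaces a real on-device model, the frames are lists of brightness values, and the 0.8 confidence threshold is an arbitrary choice.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Sequence, Tuple


@dataclass
class Event:
    frame_id: int
    label: str
    confidence: float


def run_edge_loop(
    frames: Iterable[Sequence[float]],
    model: Callable[[Sequence[float]], Tuple[str, float]],
    act: Callable[[str], None],
    threshold: float = 0.8,
) -> List[Event]:
    """Run inference on every frame locally; return only events worth reporting."""
    outbox: List[Event] = []
    for frame_id, frame in enumerate(frames):
        label, confidence = model(frame)      # local inference, no network call
        if label != "background" and confidence >= threshold:
            act(label)                        # immediate local action
            outbox.append(Event(frame_id, label, confidence))  # queued for the cloud
    return outbox


def toy_model(frame: Sequence[float]) -> Tuple[str, float]:
    """Stand-in for a real model: classifies a frame by its mean brightness."""
    mean = sum(frame) / len(frame)
    return ("person", mean) if mean > 0.5 else ("background", 1 - mean)


actions: List[str] = []
frames = [[0.1, 0.2], [0.9, 0.95], [0.6, 0.6], [0.0, 0.1]]
outbox = run_edge_loop(frames, toy_model, actions.append)

print(len(frames), "frames processed,", len(outbox), "event(s) queued for upload")
```

Every frame is processed on the device, but only the confident detection ends up in the outbox, so the raw data never has to leave the device.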

Real-World Examples

Edge AI is already powering many innovative solutions across industries.

- Smart Security Cameras:

- Scenario: A security camera detects an intruder in a remote warehouse or a home.

- How it works: Instead of streaming all video footage to the cloud for analysis, an Edge AI model on the camera itself processes the video in real-time. It can detect human figures, distinguish them from animals, or identify suspicious behavior. It only sends an alert (and perhaps a short clip) to the owner or security center when an event of interest occurs. This drastically reduces bandwidth usage, ensures privacy by keeping most footage local, and provides instant alerts with minimal latency.

- Emerging Market Context: In areas with unreliable internet or high data costs, Edge AI cameras are invaluable. They provide local real-time security without requiring constant, expensive data transmission, improving security and autonomy for local businesses and communities.

- Industrial IoT & Predictive Maintenance:

- Scenario: Sensors on factory machinery predict when a component is about to fail.

- How it works: Edge AI models embedded in industrial sensors or gateways continuously analyze data streams (vibrations, temperature, pressure) from machinery. They can detect anomalies indicative of impending failure and trigger maintenance alerts immediately, preventing costly downtime. This local processing ensures real-time analysis, even in harsh environments, and reduces the need to send all raw sensor data to the cloud, lowering cost and improving reliability.

- Emerging Market Context: For factories or agricultural equipment in regions with limited connectivity, Edge AI enables vital predictive maintenance, reducing operational costs and improving equipment uptime, directly impacting ROI and productivity.

- AI-Powered Medical Devices:

- Scenario: A wearable device monitors a patient’s heart rhythm for irregularities and alerts them instantly.

- How it works: An Edge AI model on the wearable continuously analyzes physiological data. If it detects a dangerous arrhythmia, it can alert the patient or emergency services immediately, without waiting for cloud processing. This ensures critical, low-latency intervention and keeps sensitive patient data local, enhancing privacy and security.

- Emerging Market Context: Wearable Edge AI devices can provide crucial health monitoring in remote areas lacking immediate access to medical facilities, empowering individuals with early detection capabilities.
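As an illustration of the predictive maintenance scenario, the sketch below flags sensor readings that deviate sharply from recent history using a rolling z-score. This is a deliberately simple statistical stand-in, not a trained model, and the window size, warm-up count, and threshold are assumed values.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flags readings that sit far outside the recent window of normal values."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0, min_samples: int = 5):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def update(self, value: float) -> bool:
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history)
            if stdev > 0 and abs(value - mean) / stdev > self.z_threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep anomalies out of the baseline so they do not mask later ones.
            self.history.append(value)
        return is_anomaly


# Hypothetical vibration readings with one spike.
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 5.0, 1.0]
detector = RollingAnomalyDetector()
anomalies = [i for i, r in enumerate(readings) if detector.update(r)]

print("anomalous reading indexes:", anomalies)  # [7]
```

Because the detector keeps only a small fixed-size window in memory, this kind of check fits comfortably on a gateway or microcontroller-class device, and only the flagged indexes need to be reported upstream.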

Benefits, Trade-offs, and Risks

Benefits

- Speed: Near real-time decision-making due to minimal latency.

- Privacy: Sensitive data stays on the device, reducing exposure and enhancing security.

- Reliability: Operates independently of cloud connectivity, increasing autonomy.

- Cost Efficiency: Reduces bandwidth usage and cloud processing cost.

- Sustainability: Lower energy consumption for specific tasks compared to constant cloud communication.

Trade-offs/Limitations

- Limited Compute Resources: Edge devices have less processing power, memory, and storage than cloud servers, requiring highly optimized AI models. This is a significant constraint.

- Model Complexity: Deploying complex Deep Learning models on edge devices can be challenging, often requiring specialized hardware or model compression techniques.

- Update & Maintenance: Managing and updating AI models across a large fleet of distributed edge devices can be complex.

- Training Still in Cloud: Edge devices typically don’t train complex models; they only run pre-trained ones.
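The compute constraint is easy to quantify for model weights alone. The sketch below estimates storage from parameter count and numeric precision; the 25-million-parameter model and the 64 MB budget are hypothetical, and the estimate ignores activations and runtime overhead.

```python
def model_size_mb(num_params: int, bits_per_param: int) -> float:
    """Approximate size of the weights in megabytes (1 MB = 1,000,000 bytes)."""
    return num_params * bits_per_param / 8 / 1_000_000


def fits(size_mb: float, budget_mb: float) -> bool:
    """True if the weights fit within the device's memory budget."""
    return size_mb <= budget_mb


NUM_PARAMS = 25_000_000   # hypothetical model
BUDGET_MB = 64            # hypothetical memory budget on the device

fp32_mb = model_size_mb(NUM_PARAMS, 32)
int8_mb = model_size_mb(NUM_PARAMS, 8)

print(f"float32: {fp32_mb:.0f} MB, fits: {fits(fp32_mb, BUDGET_MB)}")  # 100 MB, False
print(f"int8:    {int8_mb:.0f} MB, fits: {fits(int8_mb, BUDGET_MB)}")  # 25 MB, True
```

In this example the unoptimized model does not fit at all, while the 8-bit version does, which is why optimization is usually a precondition for edge deployment rather than a nice-to-have.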

Risks & Guardrails

- Security Vulnerabilities: Edge devices can be physically compromised or targeted by cyberattacks, requiring robust security measures.

- Data Silos: While good for privacy, too much local processing without aggregation can lead to missed broader insights.

- Bias Drift: AI models on edge devices might drift in performance if not regularly updated with fresh, diverse data, leading to bias issues.

- Ethical Concerns: Edge AI in surveillance or monitoring applications can raise privacy and ethical questions if not governed by strong guardrails and compliance.

- Resource Management: Ensuring efficient battery life and processing power on edge devices while running AI models is a constant challenge.
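One small, concrete guardrail against tampered or corrupted models is to verify a model file's digest before loading it. The sketch below uses SHA-256 from Python's standard library; the model bytes are a placeholder, and it assumes the expected digest reaches the device over a trusted channel. A digest check alone is not a complete security design, and real fleets typically add signed updates and secure boot.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def is_trusted_model(model_bytes: bytes, expected_sha256: str) -> bool:
    """Compare the model's digest with the expected one in constant time."""
    actual = sha256_hex(model_bytes)
    return hmac.compare_digest(actual, expected_sha256.lower())


# Placeholder content standing in for a real model file.
model_bytes = b"hypothetical-model-weights-v1"
expected = sha256_hex(model_bytes)  # in practice, published by the model's owner

ok = is_trusted_model(model_bytes, expected)
tampered = is_trusted_model(model_bytes + b"\x00", expected)

print("original accepted:", ok)        # True
print("modified accepted:", tampered)  # False
```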

What to Do Next / Practical Guidance

Considering Edge AI for your applications requires strategic planning.

- Now (Assess Needs):

- Prioritize Latency/Privacy/Reliability: If your application absolutely needs real-time responses, offline capability, or strict data privacy, Edge AI should be a strong consideration.

- Understand Your Data: How much data is generated? How sensitive is it? How often does it need to be processed?

- Evaluate Connectivity: Is your deployment environment characterized by intermittent or expensive internet access?

- Metrics to Watch: Current latency of your cloud-based system, cost of data transfer, and criticality of offline operation.

- Next (Pilot & Optimize):

- Identify Edge-Capable Hardware: Explore specialized edge devices (e.g., NVIDIA Jetson, Google Coral, dedicated NPUs in smartphones) for your AI tasks.

- Model Optimization: Work with data scientists to optimize and compress your AI models to run efficiently on selected edge hardware.

- Security by Design: Implement strong security measures from the start, as edge devices are often physically accessible and harder to patch than servers in a data center.

- Pilot Project: Deploy a small-scale Edge AI solution to test its performance, reliability, and ROI in a real-world context.

- Metrics to Watch: On-device latency, power consumption, local processing accuracy, and reduction in cloud data transfer.

- Later (Scale & Govern):

- Scalable Deployment: Plan for managing and updating a fleet of potentially thousands of edge devices, for example with containerization or remote management tools.

- Hybrid Architectures: Often, the best solution is a hybrid: Edge AI for real-time inference and immediate action, with cloud AI for complex training, global analysis, and long-term storage.

- Ethical Governance: Establish clear governance policies and guardrails for data handling, privacy, and ethical use of Edge AI, especially in sensitive applications.

- Continuous Monitoring: Implement observability tools for monitoring the performance and health of your edge devices and their AI models.

- Metrics to Watch: Long-term ROI, autonomy achieved, security incident rates, and compliance with data regulations.
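On-device latency, one of the metrics listed for the pilot phase, is best tracked as percentiles rather than a single average. The following is a minimal measurement sketch using only the standard library; `infer` is a hypothetical placeholder for whatever inference call your runtime exposes.

```python
import statistics
import time
from typing import Callable, Dict, List, Sequence


def measure_latencies_ms(fn: Callable, inputs: Sequence, warmup: int = 3) -> List[float]:
    """Time each call to fn in milliseconds after a few untimed warm-up calls."""
    for x in inputs[:warmup]:
        fn(x)
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        fn(x)
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies


def summarize(latencies_ms: Sequence[float]) -> Dict[str, float]:
    """Median, 95th percentile, and worst case of the measured latencies."""
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {
        "p50": statistics.median(latencies_ms),
        "p95": cuts[94],
        "max": max(latencies_ms),
    }


def infer(x):
    """Placeholder workload standing in for a real model call."""
    return sum(i * i for i in range(1000 + x))


latencies = measure_latencies_ms(infer, list(range(50)))
print(summarize(latencies))
```

Tail percentiles such as p95 matter more than the mean for real-time applications, because occasional slow inferences are what cause missed deadlines.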

Common Misconceptions

- “Edge AI replaces the cloud”: Edge AI complements the cloud, offloading specific tasks for efficiency while still relying on the cloud for training and large-scale data aggregation.

- “Edge AI can run any AI model”: Edge devices have limited resources, so models must be optimized and compressed to run effectively.

- “Edge AI is only for remote areas”: While beneficial there, it’s also crucial for high-speed applications (e.g., AR/VR, robotics) and privacy-sensitive data (e.g., smart home devices) even with good connectivity.

- “Edge AI is inherently more secure”: While it reduces data in transit, the physical security of edge devices and their software vulnerabilities must be carefully managed.

- “Edge AI is a single technology”: It’s an architectural approach involving various hardware, software, and optimization techniques.

Conclusion

Edge AI represents a powerful paradigm shift, bringing intelligence closer to the source of data generation. By moving AI processing onto local devices, it addresses critical constraints related to latency, privacy, reliability, and cost, unlocking new possibilities for real-time responsiveness and autonomy. Understanding the five reasons AI moves to the source is crucial for designing intelligent systems that are not only efficient and secure but also sustainable and impactful. As AI continues to evolve, Edge AI will play an increasingly vital role in shaping how we interact with the intelligent world around us, driving innovation from smart cities to remote agricultural fields.
