Introduction

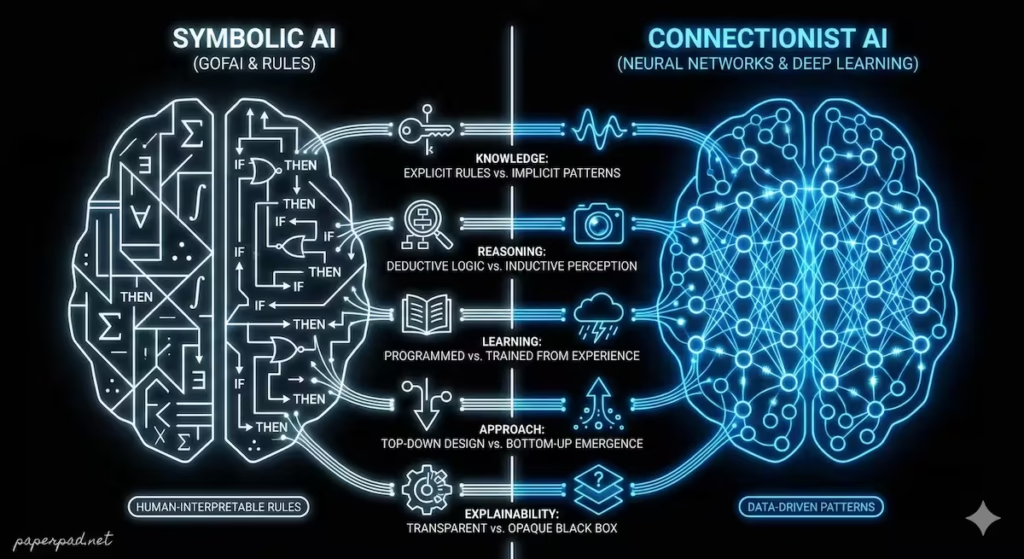

The quest to build intelligent machines has taken many turns over the decades. Fundamentally, researchers have pursued two main philosophies, two distinct “paths to machine intelligence”: Symbolic AI and Connectionist AI. While both aim to create intelligent systems, their underlying philosophies, architectures, and workflows are profoundly different. Understanding this historical and conceptual divide is key to grasping the evolution of Artificial Intelligence, why certain approaches dominated at different times, and the strengths and weaknesses of today’s powerful Deep Learning systems. It’s not just an academic debate; it informs how AI is built, what problems it can solve, and how we interact with it, from expert systems in medical diagnosis to the conversational abilities of large language models.

Core Concepts

Let’s break down these two foundational approaches to AI:

1. Symbolic AI (Good Old-Fashioned AI – GOFAI)

- Definition: This approach, dominant in early AI research (1950s-1980s), represents knowledge explicitly using symbols and rules. It attempts to mimic human reasoning by manipulating these symbols according to predefined logical rules.

- How it “thinks”: It operates like a highly structured detective, following a precise set of “if-then” rules to deduce conclusions from facts.

- Analogy: Imagine a meticulously organized library where every book is perfectly cataloged, and there’s a detailed instruction manual (the rules) on how to find any piece of information or draw any logical conclusion.

- Key Characteristics:

- Explicit Knowledge Representation: Knowledge is stored as facts and rules (e.g., “IF (X is a bird) AND (X can fly) THEN (X is a flying bird)”).

- Logical Reasoning: Decisions are made through logical inference and symbolic manipulation.

- Human-Interpretable: The rules and symbols are usually understandable by humans, making it easier to explain decisions.

- “Top-Down” Approach: Programmers define high-level rules and concepts.

2. Connectionist AI (Neural Networks & Deep Learning)

- Definition: This approach, inspired by the structure of the human brain, learns patterns and relationships directly from data. It uses interconnected “nodes” (neurons) that process information in a distributed, parallel fashion.

- How it “thinks”: It operates by adjusting the strength of connections between its “neurons” based on vast amounts of training data, gradually learning to recognize patterns and make predictions without explicit rules.

- Analogy: Imagine a child learning to recognize a cat. They don’t learn “if (has pointy ears) AND (has whiskers) THEN (is a cat).” Instead, they see many examples of cats, and their brain’s connections gradually strengthen to recognize the overall pattern.

- Key Characteristics:

- Implicit Knowledge Representation: Knowledge is stored implicitly in the “weights” (connection strengths) between neurons, not as explicit rules.

- Pattern Recognition: Excels at identifying complex patterns in large datasets, especially unstructured data like images and sound.

- Data-Driven: Requires vast amounts of data to learn effectively.

- “Bottom-Up” Approach: The system learns features and rules from raw data.

The relationship between these two is not strictly hierarchical like AI ⊃ ML ⊃ DL. Instead, they represent different fundamental philosophies that have influenced AI research at various times. Modern AI is heavily dominated by connectionist approaches, especially Deep Learning.

How It Works

The workflow and architecture differ significantly between these two paradigms.

Symbolic AI Workflow (e.g., Expert System):

- Objective: Solve a specific, well-defined problem in a narrow domain (e.g., diagnose a specific illness).

- Knowledge Acquisition: Human experts are interviewed to extract their expertise in the form of facts and rules. This is a crucial human-in-the-loop step.

- Knowledge Base Creation: These facts and rules are formally encoded into a “knowledge base” using symbols (e.g., symptom(patient, fever); rule1: IF symptom(X, fever) AND symptom(X, cough) THEN diagnosis(X, flu)).

- Inference Engine: A separate program (the “inference engine”) applies logical reasoning (e.g., forward or backward chaining) to the knowledge base and new input data (e.g., patient symptoms) to deduce conclusions.

- Explanation & Decision: The system provides a diagnosis and can often explain its reasoning step-by-step by showing which rules were fired.

- Constraints: Brittle (fails outside its explicit rules), difficult to scale, knowledge acquisition is a bottleneck.
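The symbolic workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a real expert system: it simplifies the rules to plain facts without variables (no X), and the `Rule` class, the `forward_chain` function, and the second rule are hypothetical names and content introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    conditions: frozenset  # facts that must all be known
    conclusion: str        # fact added when the rule fires


def forward_chain(facts, rules):
    """Fire rules repeatedly until no rule adds a new fact.

    Returns the final set of facts and the ordered list of fired rules,
    which doubles as the explanation trace.
    """
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conclusion not in known and rule.conditions <= known:
                known.add(rule.conclusion)
                trace.append(rule)
                changed = True
    return known, trace


# Toy knowledge base (illustrative only, not medical advice).
RULES = [
    Rule("rule1", frozenset({"fever", "cough"}), "flu"),
    Rule("rule2", frozenset({"flu"}), "recommend_rest"),  # hypothetical rule
]

facts, trace = forward_chain({"fever", "cough"}, RULES)
for rule in trace:
    print(f"{rule.name}: {sorted(rule.conditions)} -> {rule.conclusion}")

# Brittleness: input that no rule covers produces no conclusion at all.
unknown_facts, unknown_trace = forward_chain({"headache"}, RULES)
```

The trace of fired rules is what makes the decision explainable, and the empty trace for an uncovered symptom shows the brittleness listed under Constraints.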

Connectionist AI Workflow (e.g., Deep Learning Image Classifier):

- Objective: Recognize objects in images (e.g., “is this a cat or a dog?”).

- Data Collection: A massive dataset of labeled images (e.g., millions of cat and dog pictures) is gathered.

- Model Architecture: A neural network architecture (e.g., a CNN) is designed.

- Training: The network processes the labeled images, adjusting the “weights” (connection strengths) between its “neurons” iteratively to minimize errors in classification. This is largely an autonomous process once started.

- Inference: For a new, unseen image, the trained network processes it, and the output layer indicates the predicted object (e.g., “cat” with 98% probability).

- Evaluation & Feedback: The model’s performance is measured using metrics like accuracy. If it performs poorly, the architecture or dataset might be adjusted, forming a feedback loop.

- Constraints: Requires massive data, computationally intensive, “black box” nature (hard to explain decisions).
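The train, infer, and evaluate loop above can be shown at toy scale. The sketch below swaps the CNN for a single artificial neuron (a perceptron) so it runs in plain Python; the data points, learning rate, and function names are all assumptions made for illustration, and the two numeric features stand in for whatever a real network would extract from an image.

```python
def predict(weights, bias, features):
    """Return 1 if the weighted sum is positive, else 0."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train(samples, epochs=10, learning_rate=0.1):
    """Perceptron learning rule: nudge the weights after each misclassification."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            error = label - predict(weights, bias, features)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


def accuracy(weights, bias, samples):
    """Fraction of samples whose predicted label matches the true label."""
    correct = sum(predict(weights, bias, f) == y for f, y in samples)
    return correct / len(samples)


# Hypothetical labeled data: (features, label), with label 1 = "cat", 0 = "dog".
train_set = [((2.0, 1.0), 1), ((3.0, 2.5), 1), ((2.5, 3.0), 1),
             ((-1.0, -0.5), 0), ((-2.0, -1.5), 0), ((-0.5, -2.0), 0)]
test_set = [((2.0, 2.0), 1), ((-1.5, -1.0), 0)]

weights, bias = train(train_set)
test_accuracy = accuracy(weights, bias, test_set)
print("learned weights:", weights, "bias:", bias)
print("test accuracy:", test_accuracy)
```

Nothing in the code states what separates the two classes; that knowledge ends up only in the learned `weights` and `bias`, which is the implicit representation described earlier.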

Real-World Examples

The history of AI shows a pendulum swing, with current applications heavily favoring connectionist approaches.

- Symbolic AI Example: Mycin (Medical Diagnosis – 1970s)

- Scenario: An early AI system designed to diagnose infectious blood diseases and recommend antibiotic dosages.

- How it worked: Mycin operated as an expert system, using hundreds of “if-then” rules provided by infectious disease specialists. For example:

IF (the infection is primary bacteremia) AND (the site of the culture is one of the sterile sites) AND (the suspected portal of entry is the gastrointestinal tract) THEN (there is suggestive evidence (.7) that the organism is bacteroides).

It could explain its reasoning by showing the chain of rules it followed.

- Significance: Demonstrated the potential of AI in complex domains and its ability to provide explainable decisions, but was ultimately limited by the difficulty of scaling knowledge acquisition and its brittleness outside its narrow domain.
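The (.7) in the rule quoted above is a certainty factor (CF). As a rough sketch of how such factors are commonly described as combining in Mycin-style systems, the code below scales the weakest premise by the rule's CF and merges evidence from two rules. It covers only positive CFs, and the premise values, the second rule, and the function names are hypothetical.

```python
def rule_conclusion_cf(premise_cfs, rule_cf):
    """CF of a conclusion: the weakest premise (min) scaled by the rule's own CF."""
    return min(premise_cfs) * rule_cf


def combine_positive(cf_a, cf_b):
    """Combine two positive CFs that support the same hypothesis."""
    return cf_a + cf_b * (1 - cf_a)


# Hypothetical certainties for the rule's three conditions.
premises = [1.0, 0.8, 0.9]
cf_rule = rule_conclusion_cf(premises, rule_cf=0.7)  # min(premises) * 0.7

# A second, hypothetical rule also suggests bacteroides with CF 0.4.
cf_total = combine_positive(cf_rule, 0.4)

print(round(cf_rule, 2), round(cf_total, 3))
```

Because each step is simple arithmetic over named rules, the system can report both the conclusion and how strongly the evidence supports it.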

- Connectionist AI Example: Google Translate (Machine Translation – Modern)

- Scenario: Translating text or speech between dozens of languages in real time.

- How it works: Modern machine translation uses Deep Learning neural networks (often Transformer architectures) trained on massive datasets of parallel text (the same text in two languages). The network learns implicit patterns and relationships between languages, allowing it to translate entire sentences, capturing context and nuance far better than early rule-based systems. There are no explicit “if this word, then that word” rules.

- Significance: A prime example of connectionist AI’s ability to handle highly complex, ambiguous tasks with impressive accuracy, leveraging vast amounts of data. It performs with high autonomy.

- Emerging Market Context: Combining Approaches for Legal Advice

- Scenario: Providing basic legal guidance to individuals in an emerging market where access to lawyers is limited.

- How it works (Hybrid Approach): A Symbolic AI component might use explicit rules based on local legal codes (e.g., “IF (dispute is about land ownership) AND (value < $X) THEN (use local arbitration process)”). This provides clear, explainable, and compliant advice for common, well-defined cases. For more complex cases or to understand nuanced user queries, a Connectionist AI (like an LLM) could process natural language questions, summarizing relevant precedents or identifying similar cases, acting as an intelligent search tool. The workflow would involve an AI agent orchestrating both, with human-in-the-loop for critical decisions. This combination leverages the strengths of both paradigms under specific constraints.
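One way to picture the hybrid orchestration described above is a small router that tries the explicit rules first and falls back to a language model only when no rule applies. This is a sketch under stated assumptions: `ARBITRATION_LIMIT` is a made-up stand-in for the “$X” threshold, `fake_model` is a placeholder rather than a real LLM call, and flagging every model answer for human review is one possible policy, not the only one.

```python
from dataclasses import dataclass
from typing import Callable, Optional

ARBITRATION_LIMIT = 5_000  # hypothetical stand-in for the "$X" threshold


@dataclass
class Case:
    topic: str
    value: float
    question: str


@dataclass
class Advice:
    text: str
    source: str               # "rule" or "model"
    needs_human_review: bool


def symbolic_rules(case: Case) -> Optional[str]:
    """Explicit, auditable rules; returns None when no rule applies."""
    if case.topic == "land_ownership" and case.value < ARBITRATION_LIMIT:
        return "Use the local arbitration process."
    return None


def advise(case: Case, language_model: Callable[[str], str]) -> Advice:
    """Try the rules first; otherwise ask the model and flag for human review."""
    ruled = symbolic_rules(case)
    if ruled is not None:
        return Advice(ruled, source="rule", needs_human_review=False)
    return Advice(language_model(case.question), source="model",
                  needs_human_review=True)


def fake_model(question: str) -> str:
    """Placeholder for an LLM call; a real system would query a model here."""
    return f"Possibly relevant precedents for: {question}"


simple = advise(Case("land_ownership", 1_200, "Who owns the plot?"), fake_model)
complex_case = advise(Case("inheritance", 50_000, "Can a will be contested?"),
                      fake_model)
print(simple)
print(complex_case)
```

Keeping the rule path separate from the model path means the common, well-defined cases stay explainable, while the less predictable model output is the part routed to a person.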

Benefits, Trade-offs, and Risks

Benefits

- Symbolic AI:

- Explainability: Decisions are often transparent and human-readable, making it easier to audit and build trust.

- Precision: Excels in domains with well-defined rules and logical structures (e.g., mathematics, formal logic).

- Less Data-Hungry: Can work with smaller datasets if rules are well-defined.

- Connectionist AI:

- Pattern Recognition: Excels at finding complex, implicit patterns in large, unstructured data (images, sound, text).

- Adaptability: Can learn and adapt to new data without explicit reprogramming of rules.

- Scalability: Performance often improves with more data and computational power.

- Generalization: Can generalize well to new, unseen examples within its learned domain.

Trade-offs/Limitations

- Symbolic AI:

- Brittleness: Fails catastrophically outside its explicitly programmed knowledge base. Lacks common sense.

- Scalability Issue: Knowledge acquisition (defining all rules) becomes unmanageable for complex, real-world problems.

- Difficulty with Ambiguity: Struggles with nuanced, fuzzy, or ambiguous information.

- Connectionist AI:

- “Black Box” Problem: Often hard to explain why a decision was made, leading to issues with trust and accountability.

- Data Hunger: Requires massive amounts of labeled data, which can be expensive and time-consuming to collect and annotate.

- Computational Cost: Training is very resource-intensive, which drives up cost; large models can also add latency at inference time.

- Vulnerability to Adversarial Attacks: Can be fooled by subtle, often imperceptible changes to input data.

Risks & Guardrails

- Symbolic AI (if used inappropriately):

- Limited Scope: Can lead to oversimplification of complex problems if assumed to cover all context.

- Maintenance Burden: Updating rule bases for dynamic environments is difficult.

- Connectionist AI (if used inappropriately):

- Bias Amplification: Implicit biases in training data can be learned and amplified, and without explainability or guardrails they may go unnoticed.

- Hallucinations: Models, especially LLMs, can generate plausible but incorrect information.

- Lack of Accountability: The “black box” nature can make it difficult to assign responsibility for errors.

- Guardrails for Both: A robust governance framework, human-in-the-loop oversight, and careful evaluation are crucial for both paradigms, especially as they often operate within complex pipelines.

What to Do Next / Practical Guidance

Understanding these two paths helps in choosing the right AI tool for the job.

- Now (Identify the Paradigm):

- Question AI Systems: When you encounter an AI, try to discern if it’s primarily rule-based (Symbolic) or pattern-based (Connectionist). Is it giving you a precise, logical answer, or a nuanced, data-driven one?

- Start with Strengths: For problems with clear rules, consider a symbolic approach for its explainability. For pattern recognition, lean towards connectionist.

- Metrics to Watch: How transparent is the decision-making process? How much data was needed to “learn” the task?

- Next (Consider Hybrid Solutions):

- Combine Strengths: Many modern AI systems are hybrid, leveraging symbolic AI for logical reasoning and guardrails, and connectionist AI for perception and pattern recognition.

- Data vs. Rules: Evaluate your problem: Do you have clean, well-defined rules (Symbolic), or massive amounts of data with implicit patterns (Connectionist)?

- Human-in-the-Loop: For both, maintain human-in-the-loop oversight, especially in critical applications, to provide common sense and ethical context.

- Metrics to Watch: How well does the chosen paradigm fit the problem’s constraints? What is the ROI for each approach?

- Later (Embrace Evolution):

- Stay Updated: The line between these paradigms is blurring with new research (e.g., neuro-symbolic AI).

- Advocate for Explainability: Regardless of the underlying architecture, push for greater transparency and explainability in all AI systems.

- Focus on Governance: Develop strong governance and ethical guardrails for both symbolic and connectionist systems to address their respective risks.

- Metrics to Watch: Long-term scalability, adaptability to new situations, and adherence to ethical principles.

Common Misconceptions

- “Symbolic AI is dead”: While less dominant, symbolic methods are still valuable for tasks requiring precision, logical reasoning, and explainability, and are often integrated into hybrid systems.

- “Connectionist AI has no rules”: It learns implicit rules from data; it doesn’t operate randomly.

- “One is inherently smarter than the other”: They excel at different types of intelligence: symbolic for logical deduction, connectionist for pattern recognition.

- “AI will eventually merge into one approach”: While hybrid models are growing, the fundamental philosophical differences often remain relevant for problem-solving.

- “Connectionist AI understands what it’s doing”: It recognizes patterns, but its “understanding” is statistical, not based on human-like common sense or contextual understanding.

Conclusion

The historical and ongoing debate between Symbolic AI and Connectionist AI illuminates two fundamental paths to machine intelligence. Symbolic AI, with its explicit rules and logical reasoning, offers explainability and precision in well-defined domains. Connectionist AI, driven by Deep Learning and vast datasets, excels at pattern recognition and adaptability in complex, ambiguous environments. By understanding the distinct architectures, workflows, and constraints of these two paradigms, we can better appreciate the evolution of AI, make informed choices about its application, and ultimately build more robust, transparent, and ethically sound intelligent systems that leverage the best of both worlds.

Next, let’s explore Edge AI.